Michal Kosinski has only just published his latest paper, yet on the same day the Stanford University psychologist and data scientist is already seeing criticism emerge on Twitter.

The article, “Psychological targeting as an effective approach to digital mass persuasion”, uses “real-world experiments”, in the form of Facebook adverts for genuine products that reached 3.5 million people, to show that micro-targeting users with messages tailored to their individual psychological profiles makes them more likely to click on ads and buy products. Similar research into online psychological persuasion might well go on, less transparently and at a much grander scale still, at the Silicon Valley tech companies just down the road from Stanford – not to mention in Russian troll factories. But the Twitterati’s censure is very much focused on Kosinski.

“I’m taking some flak because people [think that] because I showed that this psychological persuasion is effective, suddenly this [work] is the root of the problem,” he tells Times Higher Education in his Stanford office. Such critics, he adds, “don’t understand that telling people ‘Hey, the flu virus is deadly’ doesn’t make it deadly. The virus is objectively deadly, and I’m trying to warn you guys that it’s deadly.”

That Kosinski seems a little preoccupied with the reception of his paper is understandable, given the whirlwinds set in motion by his previous research into how our intimate traits can be detected through the digital footprints we all leave behind. While at the University of Cambridge, the Polish-born academic, now assistant professor of organisational behaviour at Stanford Graduate School of Business, was lead author on a 2013 paper that demonstrated that people’s Facebook likes could be used to “automatically and accurately predict a range of highly sensitive personal attributes”, including their personality traits, sexual orientation, intelligence and political views. That paper has led some commentators to describe him as the man who inadvertently “enabled” the “digital revolution” set in motion by Cambridge Analytica. The political data-mining and strategic communications firm, owned mostly by the US billionaire and conservative donor Robert Mercer, was prominent in Donald Trump’s presidential election campaign and has links to a data firm involved in the pro-Brexit campaign in the UK. The firm was in the headlines last weekend when The Observer alleged that it had made use of a large amount of Facebook data without users’ consent in a possible attempt to influence the 2016 US presidential election.

Then, in 2017, a paper co-authored by Kosinski showing that facial recognition technology could be used to detect individuals’ sexual orientation generated a huge backlash from gay rights groups. Ashland Johnson, director of public education and research at US LGBTQ rights organisation the Human Rights Campaign, condemned the paper, “Deep neural networks are more accurate than humans at detecting sexual orientation from facial images”, as “junk science” that had left the lives of millions “worse and less safe” because of its potential to be used to aid a “brutal regime’s efforts to identify and/or persecute people they believed to be gay”. Kosinski says that he was targeted with death threats in the aftermath of the paper’s publication, resulting in a campus police officer being stationed outside his door.

So is Kosinski really making bombs, as his critics claim? Or are his papers, as he argues, controlled explosions of weaponry already in use by others, and intended to advise us of its pressing dangers? Either way, his work in a cutting-edge field has made an extraordinary impact. It has relevance for all our lives by explaining how our digital footprints expose us to terrifying privacy risks, and it offers insights into the unequal yet significant power relationship between Silicon Valley and academia.

Kosinski was already deputy director of Cambridge’s Psychometrics Centre before he had even completed his PhD, in 2013. He had set out as a “traditional social psychologist trained not in computer science but in traditional small-sample research [and] questionnaire research”. But he became frustrated that the scientific establishment “refused to accept that the new reality…of the online environment at large has any significance”. Kosinski was also convinced that biases could be removed from recruiting processes for jobs and educational courses if applicants’ personalities were assessed via the evidence of their digital footprints rather than with the traditional psychometric tests that use a lengthy questionnaire to probe the “big five” personality traits: openness to experience, conscientiousness, extraversion, agreeableness and neuroticism (known collectively by the acronym Ocean).

Psychometrics – psychological measurement – is “such an important field, with potential to greatly ease the lives, especially, of underprivileged people and people who suffer from psychological ailments”, he adds.

David Stillwell, now in Kosinski’s former position at Cambridge, launched a Facebook app called MyPersonality in 2007. Users could take a traditional Ocean psychometric test but could also opt in to allow researchers to record their Facebook profile (including their likes), as an easily accessible and interpretable form of digital footprint. The app proved popular, and the database contains more than 6 million test results and 4 million Facebook profiles.

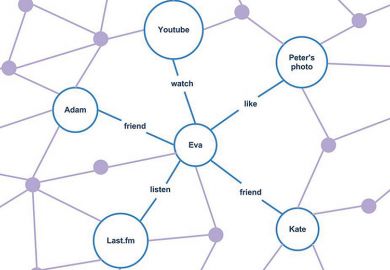

The resulting 2013 paper, “Private traits and attributes are predictable from digital records of human behaviour”, co-authored by Kosinski, Stillwell and Microsoft Research’s Thore Graepel, used a dataset of 58,000 volunteers who took psychometric tests and provided their Facebook likes and detailed demographic profiles. The findings were startling: for the openness trait, “observation of the user’s Likes is roughly as informative as using their personality test score” gathered from an Ocean questionnaire, the paper found. Even a detail as intimate as whether a subject’s parents had stayed together or separated before the subject turned 21 was detectable through their likes. The best predictors of high intelligence included likes for curly fries; low intelligence was indicated by likes including “I Love Being a Mom”. Similar personality predictions, the paper suggested, could probably be made using other forms of digital footprint, such as web searches, browsing histories and credit card purchases.

Altmetric ranked the paper ninth in its list of the 100 academic papers that received the most attention online in 2013. Within weeks of its publication, Facebook changed users’ settings to make likes private by default.

A 2015 paper on which Kosinski was a co-author took the research further. It found that computers’ judgements of people’s personalities based on their Facebook likes were “more accurate and valid” than those made by their colleagues, friends, family and even spouses. The paper, “Computer-based personality judgments are more accurate than those made by humans”, showed that a “simple equation based on a random collection of around 200 likes from a Facebook profile can make those judgements and predictions [about personality] better than your own wife”, Kosinski says. “Which is completely mind-blowing because it’s a stupid equation based on 200 likes. A 16-year-old person probably leaves more than 200 digital footprints per hour.”

This “brings me to the conclusion that going forward…we are not going to have any privacy”, he adds.

Privacy is at the heart of concerns about the political applications of such knowledge. Alexander Nix, Cambridge Analytica’s CEO, explained in a 2016 presentation available on YouTube that “if you know the personality of the people you are targeting, you can nuance your messaging to resonate more effectively with these kinds of groups”. Nix also explained that Cambridge Analytica, then just starting its work on the Trump campaign, centred its method on the Ocean personality model. One of his examples of targeted marketing was a pro-gun rights advert tailored to a “neurotic and conscientious audience”, based on the image of a burglary.

The Guardian reported in 2015 that it had seen documents showing that “Cambridge Analytica’s parent, a London-based company called Strategic Communications Laboratories (SCL), was first introduced to the concept of using social media data to model human personality traits in early 2014 by Dr Aleksandr Kogan”, the psychology lecturer at Cambridge at the centre of last weekend’s revelations about the misuse of Facebook data.

Meanwhile, a 2017 report by Switzerland’s Das Magazin suggested that Kosinski was approached by Kogan “on behalf of a company that was interested in Kosinski’s method, and wanted to access the MyPersonality database. Kogan wasn’t at liberty to reveal for what purpose.” Kosinski ultimately broke off contact, according to the magazine, when Kogan finally revealed the company’s name and Kosinski discovered that one of its focuses was “influencing elections”.

Cambridge Analytica told Das Magazin that it “has had no dealings” with Kosinski and “does not use the same methodology” as he did. However, the methods that Nix described in his 2016 presentation are strikingly similar.

Kosinski says that the “main framing of the [2013] paper is privacy risks”. The paper’s conclusion refers to how predicting individuals’ attributes from their digital footprints might “improve numerous products and services”, but might also have “considerable negative implications, because it can easily be applied to large numbers of people without obtaining their individual consent and without their noticing”.

When Kosinski started working on the paper, he realised that he was “not the first person who figured it out”. Companies such as Netflix and Facebook, he says, were already using the capacities of digital footprints to reveal individual personality traits in more sophisticated ways. Barack Obama’s 2008 presidential election campaign had pioneered “psychological micro-targeting”, and governments were “tracking people online trying to figure out their underlying, intimate traits – trying to distinguish between a terrorist and a not-so-dangerous fanatic”.

Given this level of sophistication, adopting methods similar to those detailed in his 2013 paper would be “stupid”, in Kosinski’s view. Regarding Cambridge Analytica, he says: “The other thing that shows they were novices who didn’t know what they were doing…is that they actually talked about it. If you are in this business, you know that you do not tell people how you make the sausage.” Those who worked for Obama and Hillary Clinton had an “ability to micro-target…a few levels higher than whatever Cambridge Analytica could pull off. But they were smart enough to realise that you just don’t talk about it.”

But, for Kosinski, it is “great that Cambridge Analytica said those things [about its methods] because it brought to public awareness something that I had tried to make people aware of for years”. When he published his 2013 paper, “I would go out and say: ‘Look guys, there are risks for privacy.’ And people would be like: ‘Oh, but tell us about how curly fries predict intelligence.’” (Kosinski told The Daily Telegraph that he had “very little idea” about the answer to that last question, but speculated that “some clever people might say they liked quirky things to express their own novelty”.)

However, he feels that critics of Cambridge Analytica “worry about the wrong thing” and suggests that the firm is “not such a dangerous actor compared with what governments and institutions and more serious companies can do”.

Asked about the connections between his research, Cambridge Analytica, Trump and Brexit, Kosinski says: “I’m not trying to improve the bomb…I’m just saying: ‘I will, in my lab, detonate this bomb and show you how much damage it can do.’ I’m basically warning you against the negative impact of technologies that are already being used for the very particular purpose I’m warning against.”

The rationale for his paper showing that sexual orientation can be predicted from facial images “is exactly the same”, he continues. “The technology has been developed for many years: it’s being widely used for precisely the purpose [gay rights groups worried about]: detecting crime. That is what I’m warning against.”

His mention of crime is presumably a reference to the fact that – as his author’s note to the paper makes clear – homosexuality is illegal in some countries; the note also references a Wall Street Journal article about the use of facial recognition technology in China to track crime suspects. This use of technology seems rather distinct from profiling people’s intimate traits, although Kosinski also links to a Business Insider article about an Israeli start-up that claims to be able to predict how likely people are to be, for instance, terrorists or paedophiles by analysing their faces.

The paper, co-authored with Stanford colleague Yilun Wang, used deep neural networks (a type of artificial intelligence that “learns” in a way loosely modelled on the human brain) to examine more than 35,000 facial images of self-identified gay and straight people, gathered from a dating website. An algorithm could correctly distinguish between gay and heterosexual men in 81 per cent of cases, and between gay and heterosexual women in 71 per cent of cases, the study found. The paper says that given that “companies and governments are increasingly using computer vision algorithms to detect people’s intimate traits, our findings expose a threat to the privacy and safety of gay men and women”.

Kosinski emphasises how much information humans can already read from faces. “Gender, age, ethnicity, genetic disease are all clearly displayed on the face, and we have no problem, even without any training, with judging those.” Yet humans “are not really great at [using facial information] when it comes to, say, sexual orientation or political views, but it seems that computers are”.

But in addition to the protests of gay rights groups, which also called on Stanford to distance itself from the research, The New York Times noted that “dozens of academics, scientists and others…picked apart the study in blog posts and Tweet storms”. In one exchange on Twitter, a mathematician told Kosinski: “What you call academic research, I call weaponised algorithms.”

This reaction was not entirely unexpected. “I sat on the sexual orientation paper for a year before I published it,” Kosinski admits. He “worried about the hate” and “about keeping my job”. If he had stayed quiet, his career would be “easier” and he would be facing fewer death threats. However, he eventually decided that “it’s morally inexcusable to keep this…knowledge away from people. Given that Russia, China, America, Germany and other countries are rolling things like this out, I went ahead and published it. But given the reaction that I got, none of my students will ever do a similar thing.”

If academia has sometimes been a hostile environment for Kosinski, a lucrative haven would surely await him in the tech industry; Stanford, after all, has been described as a “farm system for Silicon Valley”. However, “being in academia maximises my chances to have a positive impact on the world”, he says. “I would not be able to warn people against privacy risks if I worked at a company.”

On the brain drain to Silicon Valley, he admits that he “gave up trying to work with computer science students because they always leave me after three months because they’ve got a seven-digit sign-up bonus with one company or the other”. The drain happens “not only because of money” but also “because you can do projects in industry you cannot do in academia”, such as “playing with people’s newsfeeds, or playing with people’s experience on Google search”.

Given that it is “difficult to expect that academia will be able to compete with industry when it comes to funding for research and access to data”, Kosinski suggests that “we may have to accept that the societal function of educating people will shift from the universities to firms like Facebook. When you are graduating with your bachelor’s in computer science, you [then] go to Facebook. After three years [there], you have learned a lot – probably the equivalent of two master’s and a PhD.”

Kosinski also advocates that tech companies publish more academic journal articles, in order to “share the science they are producing within the walls of the company”. One notable case of a tech company doing just this is the 2014 PNAS paper by a member of Facebook’s core data science team and two Cornell University researchers, detailing the “emotional contagion” seen when individuals’ Facebook newsfeeds were manipulated by reducing the number of “positive” or “negative” posts they saw. But, according to The Guardian, “lawyers, internet activists and politicians” reacted by describing the research as “‘scandalous’, ‘spooky’ and ‘disturbing’”.

“Guess what? [Facebook] will still be doing it,” says Kosinski. “They just won’t be telling anyone what they are doing. So we, as a society, lost a chance to learn, to have a discussion about potential policies. We just bullied them into silence.”

But is there more that could be done to incentivise young computer scientists to remain in academia? Kosinski argues that apart from “maybe trying to pay people better”, universities should allow researchers “to run research more freely” by relaxing privacy rules around data collection.

“We already have companies doing way more invasive research than whatever social scientists could ever do,” he says. “The only way for us to catch up as scientists – to try to tell the general public what…those companies might be doing behind closed doors – is [to be given] a bit more ability to run those studies and use those data.”

For all his concerns about privacy risks, Kosinski also seems, at times, to be something of a techno-utopian. On the wall of his office is an artwork that shows, in the background, a police officer in riot gear and gas mask, wreathed in tear gas. In the foreground, facing towards the officer and with its back to the viewer, is a figure wearing a western-style gunslinger’s belt. The figure’s hand is poised to pull out a weapon, but, rather than a pistol, it is the “f” from Facebook’s logo.

During Kosinski’s early years, Poland was still a communist state within the Soviet bloc. “Coming from a country that for 50 years was basically closed off from the rest of the world” showed him how “the attitudes of an entire country, or the established truth, can change in a matter of days or hours,” he says. His first days at school came just after Poland’s first free elections in 1989, and he remembers the headmaster coming into class and taking down the white eagle on a red background which, stripped of its pre-communist crown, had been the symbol of communist Poland. The headmaster replaced it with a poster on which the crown had been restored. This sudden overturning of the “ideology that was being promoted to people” made Kosinski “really cautious when it comes to accepting well-established truths”, and he cites “echo chambers, information bubbles and fake news” as “potential myths we are perpetuating in society”.

In an on-stage interview last year at the Computer History Museum, near Palo Alto, Kosinski was questioned about the impact of personalised marketing in politics and whether it might ultimately break down “consensus reality” and democracy. He responded that the Soviet Union was an example of a country that achieved perfect “consensus reality” through propaganda. In a country like the US, he added, people’s “information bubbles” are larger than ever before in human history thanks to a combination of expanded social networks, journalism and algorithms that “try to give you a personalised view of the world”.

Kosinski’s latest paper shows the effectiveness of psychological targeting in influencing “the behaviour of large groups of people”. Returning to his flu metaphor, he says: “By warning people the flu virus is deadly, inevitably I’m also maybe giving some bad guys some bad ideas…But people forget that [most of] those guys already know that the flu virus is deadly. They spend a lot of time and a lot of resources researching those things.

“I’m just one single computational social scientist working with very limited resources and a few students. Russia, the US and big corporations have buildings filled with people like me – much better paid, much better equipped, without any IRB [institutional review board] control, with much more data – studying not only how to improve people’s lives with those technologies but how to take advantage of people, how to affect their well‑being.”

No amount of legislative resistance can avert the coming “hurricane” around privacy, Kosinski believes. But he hopes that his forecasting, however badly it may be received, will ultimately help to minimise the storm’s destructive power. “The sooner we start getting ready for this unpleasant future,” he says, “the better protected we are going to be.”

POSTSCRIPT:

Print headline: Privacy investigator
