Earlier this month, a viral Substack post by political scientist Alexander Kustov issued a blunt challenge to his fellow academics: wake up on AI.

Kustov, an associate professor at the University of Notre Dame, argued that AI can already produce publishable social science research and that most faculty opposition to the technology is “status protection dressed up as principle”. The piece ricocheted across academic social media, generating the predictable mix of enthusiastic agreement and defensive outrage. Both reactions missed something important.

Kustov is largely right about the disruption coming for academic research and publishing. But his focus is squarely on how professors produce research. More urgent but less examined is the question of what universities are supposed to be developing in their students – and whether they still know how to do it in an era when AI can generate a passable essay in seconds. That question has a more uncomfortable answer than most AI optimists are willing to give.

Ask a roomful of faculty members what they think of “AI-assisted” student work and the overwhelming reaction is that it is mediocre. Formulaic. Hollow. The kind of writing that fulfils the technical requirements of an assignment while signalling, to any trained reader, that no genuine thinking occurred. They’re not wrong to notice this. However, they’re wrong to draw the conclusion they typically do.

The anti-AI academic consensus has hardened into something close to doctrine: that AI produces “slop” and universities that tolerate it are abetting the degradation of intellectual life. Experienced faculty can tell immediately whether a piece of work reflects genuine student effort, and their frustration with being drowned in slop is legitimate.

But frustration is not analysis, and the faculty consensus has confused the symptom with the disease. AI does not produce mediocre work. Mediocre thinkers produce mediocre work. AI just lets them do it faster and at higher volume. That is a real problem – but it is a problem with the operator, not the tool.

AI is already highly capable. It has passed the bar exam by a comfortable margin, outscored medical students on complex clinical reasoning exams and achieved diagnostic accuracy comparable to that of non-expert physicians across a wide range of conditions. Whatever one thinks of AI aesthetically or pedagogically, the claim that it inherently produces “slop” is simply not defensible.

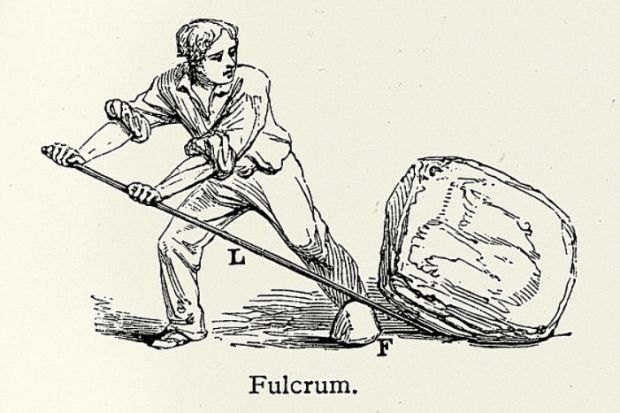

Imagine AI as a force multiplier. It will genuinely improve the writing quality and speed of a mediocre student. But that writing will still be below average because the mediocre student cannot recognise the gap between what AI gave them and what excellent output actually looks like. Nor could they evaluate the quality of their own argument before AI existed: removing the tool would not develop that judgement.

Now place an expert in front of the same tool: a senior scholar, a seasoned journalist, an experienced attorney. AI will not write at their level unprompted. But that is not how experts use tools. They prompt with precision. They provide intellectual architecture. They specify the argument, the structure, the things the output cannot get wrong. AI drafts; they direct. Then they edit, interrogate, revise, effectively coaching the output up to their standard – which is something only they can do because recognising that standard requires expertise they have spent years building. The result is work produced at their level of quality but with greater velocity. The multiplier is real and, for the expert, genuinely powerful.

Research on AI in the workplace bears out this asymmetry, showing that novices gain productivity from AI, performing at levels that previously required significantly more experience. But novice gains in productivity are not the same as gains in quality.

Kustov’s own piece inadvertently illustrates the point, too. He revealed in a postscript that the article was generated by agentic AI working from his social media posts and notes. The output was sharp and persuasive precisely because Kustov is an expert. The ideas were his, the judgements were his, the decade of domain knowledge was his. The AI executed. That is not a diminishment of the piece: it is the clearest possible demonstration of the multiplier in action. A novice feeding the same tool their half-formed social media posts would not have produced that article.

The right response to AI for higher education is therefore to redouble our commitment to developing the capacities that make the multiplier powerful. This is, or should be, the core purpose of a liberal arts education.

The case for liberal arts as the model for AI-era education is not new. Universities that produce generalists who can think across disciplines and navigate novel problems are far better positioned for a world where AI handles routine cognitive tasks. But the AI mediocrity debate adds a sharper edge to that argument. If AI amplifies what you bring to it, then the liberal arts mission of developing critical thinkers, not just credentialed producers, becomes not a nostalgic aspiration but a practical necessity. The student who arrives at the workforce with deep expertise in how to think and judge will wield AI with power. The student who arrives with surface knowledge and borrowed competence will be undone by it.

This does not mean AI belongs everywhere in the university. It doesn’t. There are courses and assignments where the struggle to think without a scaffold, to write badly before writing well, is precisely the point. Developing expertise requires doing the cognitive work that builds it. AI multiplication only works if there is something to multiply.

Some assignments should therefore be AI-free by design. Others should actively engage AI, teaching students to evaluate, direct and improve its outputs – a sophisticated intellectual skill that requires genuine domain knowledge.

AI does not make expertise obsolete. It makes expertise matter more. The gap between what a novice and an expert can produce with AI is wider than the gap between what they could produce without it. The task of universities is to produce people who are on the right side of that gap.

That is not a technology problem. It is a mission problem. And it is entirely ours to solve.

Nicholas B. Creel is associate professor of business law at Georgia College & State University.
