Universities UK has just published its characteristically even-handed and rigorous analysis of the 83 responses it received to its survey of institutional views on the recent and possible future iterations of the teaching excellence framework. It makes an interesting read.

It appears to show that the TEF was a relatively cheap exercise: it estimates that the 134 universities that entered spent just over £4 million on preparing their submissions. At roughly £30,000 per institution, the TEF seems pretty good value to me. Some 73 per cent of respondents believe that it will raise the profile of teaching and learning in universities, and 81 per cent had already made additional investment in teaching and learning, with almost half of those saying that the TEF had influenced their decision to do so.

However, the most eye-catching figure concerns whether the TEF will accurately assess teaching and learning. Just 2 per cent responded positively, and 73 per cent disagreed. This dismissal is hard to reconcile with the 40 per cent or so of institutions telling UUK that they had committed resources to improving teaching and learning as a consequence of the TEF. It becomes even more puzzling when UUK asked about priorities for the TEF's future development. New metrics for teaching excellence scored highly. Fair enough, but what are these preferable metrics that will accurately assess teaching and learning?

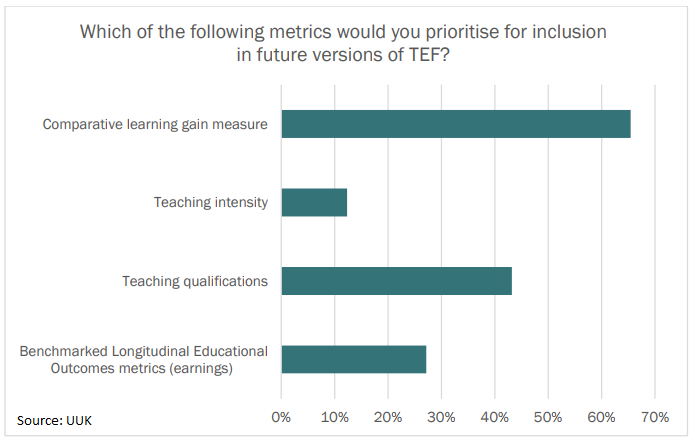

The most popular option, according to the survey, is the development of a comparative learning gain measure. If the current metrics are controversial, then everything I have seen about this potential new approach suggests that it will be no less so.

One of the problems with the sector’s hostility to the current metrics is that it has encouraged the government to pursue a measure of teaching intensity for the next iteration. While the first set of metrics were not perfect proxies, they at least came close to measuring outcomes.

Introducing an input measure is a retrograde step, not least when it combines a self-declared number of contact hours weighted by student-to-staff ratio with a new student survey that assesses students' perceptions of their contact hours and self-directed study, and whether, taken together, these meet their learning needs.

Welcome, then, to the “gross teaching quotient”, an unholy mix of one of the most gameable of the current league table categories and yet another student survey, this time asking students what they think about the level of input they received. What this will tell anybody about teaching quality is opaque to me.

Just over 10 per cent of the 83 institutions in the UUK survey would prioritise this metric in future versions of the TEF. I would be amazed that more respondents preferred this metric to the original set of metrics if I did not suspect that institutional self-interest informed some of these answers.

There is also little enthusiasm for a subject-level TEF. Once again, I find myself in the mainstream of higher education opinion here. This is not because it can’t be done (it can), nor because it would be too expensive (relative to the overall investment being made by students, the cost would be marginal). My objection is that I do not believe future students would find it useful, any more than they find the Key Information Set (KIS) data useful.

Politicians – like economists – might wish on occasion that customers were more rational and evidence-based in their choices. But they are not. There are lots of reasons why students might choose to come to Nottingham Trent University, for example, but I would lay good money that the gross teaching quotient for a particular course will rarely be one of them.

Edward Peck is vice-chancellor of Nottingham Trent University.
