Universities must monitor the impact on student stress and staff workload as they shift away from “high-stakes” exams and towards using technology to conduct “continuous” assessment, a report says.

A paper published by Jisc, UK higher education’s main technology body, says digital tools offer “a host of opportunities for students to capture and reflect on evidence of their learning, to use and share formative feedback and to record progress”, adding that it “may be more effective to assess learners continually throughout their course instead of through a final exam”.

The Future of Assessment predicts that learning analytics systems – which track student performance and engagement with educational materials – “might make some ‘stop and test’ assessment points redundant”, and claims that annual assessment cycles “might be replaced by assessment on demand, whereby students can evidence their learning when they feel ready”.

However, the report warns that there is “a danger that continual, low-level assessment may prove to be more stressful for students”. And while automation of assessment may reduce workload for staff, it says, some lecturers “may also be prepared to experience a small increase in workload in order to transition to a better continual assessment-focused approach that can provide a more authentic assessment experience and put less stress on students”.

Andy McGregor, Jisc’s director of edtech, said the trend would need to be watched closely.

“Technology has to be implemented carefully so students don’t feel they are constantly being monitored or constantly being assessed, and academics don’t feel they constantly have to set assessments and are constantly having to mark,” he said. “I think technology offers solutions there, but it also offers risks; that’s why technology is never the answer on its own.

“[We also need] good assessment, good education design [and] good pedagogy. If all of those things are thought about, then technology can be used well.”

The Jisc report calls for UK universities to move faster to embed technology into assessment or face “being rapidly left behind”. It observes that although Newcastle University is one of the UK’s leaders in this field, conducting about one in 10 exams digitally, several institutions in the Netherlands and Norway are close to 100 per cent.

Digital evaluation will help to address the “growing disconnect” between how students are assessed and the value they can take from it, according to the report, which says continuous assessment would teach students to learn and adapt rather than simply absorb information.

Mr McGregor highlighted innovations such as online writing coaches, which help students learn by practising, and peer-to-peer assessment tools, which help students learn by asking questions and posting comments.

The report says technology can help to create more "authentic" assessments, such as developing a website. It adds that universities need to strike a balance between human marking and artificial intelligence tools that offer increasingly sophisticated instant feedback.

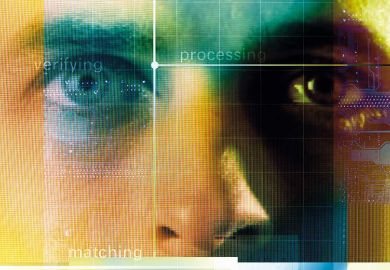

The report also calls on universities to adopt authorship detection and biometric authentication tools to prevent cheating.
