Welcome to our new monthly rankings blog where I will be discussing aspects of our World University Rankings and how these might change in the years ahead.

As I have previously announced, we will be launching a new methodology, called WUR 3.0, at our World Academic Summit at the University of Toronto in September, with a view to publishing a new version of the rankings in the second half of 2021. This blog will explore some of the new metrics that we are considering for inclusion in this next iteration of the rankings.

This month I’m going to focus on our research environment pillar, which currently covers three different areas:

- Reputation

- Income

- Productivity

Reputation is gathered through our annual survey of active academics, which each year receives responses from more than 10,000 researchers geographically stratified across the world.

Research income assesses the ability of a university to attract money to support its research. It is normalised by the number of researchers and is balanced using purchasing power parity (PPP).
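To make the normalisation concrete, here is a minimal sketch in Python. It is an illustration only, not the calculation used in the rankings: the function name, the parameter names and the convention that the PPP factor is expressed as local currency units per international dollar are all assumptions made for this example.

```python
def income_per_researcher_ppp(income_local, ppp_factor, researchers):
    """Return research income per researcher in PPP-adjusted units.

    income_local: research income in local currency (hypothetical input).
    ppp_factor:   local currency units per international dollar (assumed convention).
    researchers:  number of researchers at the university.
    """
    if ppp_factor <= 0:
        raise ValueError("ppp_factor must be positive")
    if researchers <= 0:
        raise ValueError("researchers must be positive")
    # Convert to a common purchasing-power basis first, then scale by size.
    income_ppp = income_local / ppp_factor
    return income_ppp / researchers


# Illustrative, made-up figures: 1,000,000 local units, PPP factor 2.0, 100 researchers.
example = income_per_researcher_ppp(1_000_000, 2.0, 100)
```

The point of the two divisions is that a large, well-funded university in a high-cost country is not automatically ranked above a smaller institution whose income goes further locally.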

Productivity is defined as the number of publications (including papers, conference proceedings, books, book chapters and reviews) generated, normalised by the number of academics at the university. We also normalise this measure by subject, taking care to ensure that we account for papers in subjects where a university doesn’t report any academics.

All these are sensible measures that relate to research and, when combined with assessments of the quality of research (citation impact), provide a useful component of university evaluation.

But there is another element that we might want to consider.

One approach that is repeatedly cited as a feature of excellence in research is cross-disciplinary work. For the purposes of this blog I will define this as work that involves researchers from more than a single academic discipline. It might also be called interdisciplinary or multidisciplinary research.

Of course, this work is already measured, to a degree, in the metrics described above. However, the bibliometrics used in the World University Rankings are based on a hierarchical subject classification, which ultimately derives from a western European view of the relationships between subjects. While we might not describe subjects as belonging to philosophy or natural philosophy in the 21st century, this still shapes our discussion.

In addition, almost all bibliometric suppliers started with a focus on the sciences, and we typically see those subjects as being more strongly developed, both in terms of content and in terms of the granularity of subject divisions. Within the World University Rankings we use Elsevier’s “All Science Journal Classification (ASJC)” coding system, but that isn’t the only one that exists. Most countries have their own system for coding disciplines: Classification of Instructional Programs (CIP) codes in the US; Australian and New Zealand Standard Research Classification (ANZSRC) codes in Australasia; and Higher Education Classification of Subjects (HECoS), which will soon replace the Joint Academic Coding System, in the UK.

So, is there room for a more formal measure of cross-disciplinary work? What might we need to consider if we were to move ahead with this? And can we foresee any unintended consequences?

A key consideration is how we would define cross- or multidisciplinary work. As I mentioned earlier, the granularity of disciplines in the current hierarchies is far from equal: some areas are divided into many fine-grained subjects, others into only a few. This results in more apparent interdisciplinary work in some parts of the academic spectrum than in others.

This brings us to a related challenge: not all multidisciplinary work is equally unusual (although we might ask if unusual is necessarily “good”).

A useful indicator was provided by my colleague Billy Wong: an analysis of how the performance of universities (in this case measured by our traditional bibliometric measure, field-weighted citation impact) correlates across different subjects. A high positive correlation signals that institutions performing well in one field tend to perform well in the other. It is no surprise that computer science and engineering correlate strongly, or that farming and fine arts have a negative correlation.

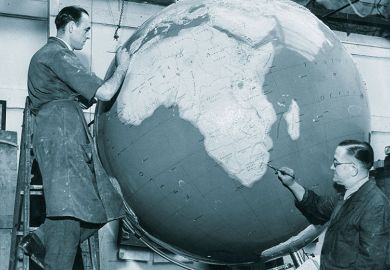

Table showing the correlation between citation performance by subject for universities included in the THE World University Rankings

Source: THE World University Rankings
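For readers who want to see what a subject-by-subject correlation involves, here is a minimal sketch in Python. It assumes a plain Pearson correlation over per-university scores, which may not be the exact method behind the table, and the citation impact values are invented purely for illustration.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of the same length, at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


def subject_correlations(scores_by_subject):
    """Correlate every pair of subjects across the same list of universities."""
    subjects = sorted(scores_by_subject)
    return {
        (a, b): pearson(scores_by_subject[a], scores_by_subject[b])
        for a in subjects
        for b in subjects
    }


# Made-up citation impact scores for four hypothetical universities,
# listed in the same order under each subject.
fwci = {
    "computer science": [0.8, 1.0, 1.2, 1.5],
    "engineering": [0.9, 1.1, 1.3, 1.6],
    "fine arts": [1.4, 1.2, 1.0, 0.7],
}
matrix = subject_correlations(fwci)
```

In this toy data, computer science and engineering move together while fine arts moves in the opposite direction, which mirrors the pattern described above. Real data would be far noisier.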

How might we account for this? We could, potentially, discount for closeness using a matrix similar to the chart above to reward collaborations between far-apart disciplines more than collaborations between similar fields. But is that reasonable?
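One way such a discount could work is sketched below in Python. This is a thought experiment rather than a proposed metric: the mapping from correlation to "distance", the averaging over subject pairs, the subject labels and the correlation values are all assumptions invented for this example.

```python
from itertools import combinations


def distance(correlation):
    """Map a correlation in [-1, 1] to a distance in [0, 1] (assumed mapping)."""
    return (1 - correlation) / 2


def cross_disciplinary_score(subjects, correlations):
    """Average pairwise distance between the subjects involved in one paper.

    subjects:     subject labels attached to a paper.
    correlations: dict keyed by frozenset({subject_a, subject_b}).
    A paper in a single subject scores 0.0.
    """
    pairs = list(combinations(sorted(set(subjects)), 2))
    if not pairs:
        return 0.0
    total = sum(distance(correlations[frozenset(pair)]) for pair in pairs)
    return total / len(pairs)


# Invented correlations between three subjects, for illustration only.
correlations = {
    frozenset({"computer science", "engineering"}): 0.8,
    frozenset({"computer science", "fine arts"}): -0.2,
    frozenset({"engineering", "fine arts"}): -0.4,
}

near = cross_disciplinary_score(["computer science", "engineering"], correlations)
far = cross_disciplinary_score(["computer science", "fine arts"], correlations)
```

Under these assumptions a computer science and fine arts collaboration scores higher than a computer science and engineering one. Whether that extra reward is deserved is exactly the open question.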

Alternatively, we could measure how well universities perform on topics that are naturally cross-discipline, such as climate change or gender equality. Our work on the United Nations’ Sustainable Development Goals through the Times Higher Education University Impact Rankings does exactly this; our ranking on SDG 13 Climate Action, for instance, looks at university research from a wide range of disciplines, from atmospheric science to ecology and the social sciences. But which topics should be chosen? The decision itself could be a very contentious one.

Do you have ideas about how we can improve our rankings? Send suggestions and questions to us at profilerankings@timeshighereducation.com

Duncan Ross is chief data officer at Times Higher Education.
