Germany, France and Japan have joined forces to fund research into “human-centred” artificial intelligence that aims to respect privacy and transparency, in the latest sign of a global split with the US and China over the ethics of AI.

The three countries’ funding agencies have put out a joint call for research proposals, backed by an initial €7.4 million (£6.8 million). They stressed that they “share the same values” and warned that the technology has the potential to “violate individual privacy and right to informational self-determination”.

Observers see the move as part of a wider divergence in AI research priorities, with Europe, plus Japan and potentially Canada, taking the lead on its ethical development.

“We share the same beliefs and the same standards,” said Susanne Sangenstedt, a programme officer at the German Research Foundation who is helping to oversee the collaboration.

The joint call has been under development since last year, she explained. Last November, the German Centres for Research and Innovation, a global network of universities and companies, organised an AI symposium in Japan involving ethicists and social scientists as well as more technically minded academics.

Results should, if possible, be released on an open access basis, said Dr Sangenstedt. The funding call asks academics to pitch projects on the “democratisation” of AI, the “integrity of data for fairness”, and “AI ethics to avoid gender/age segmentation”, as well as in areas such as machine learning, computer vision and data mining.

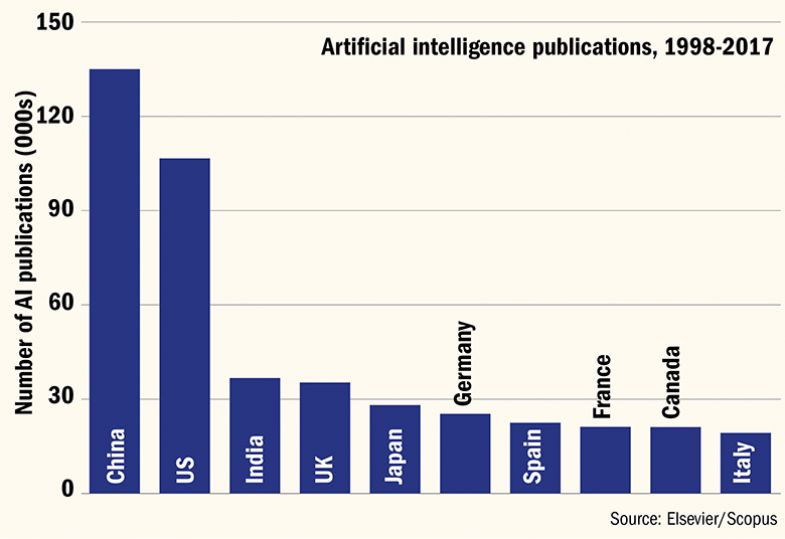

China and US lead on AI papers

Germany, France and Japan are more concerned than some of their rivals that “if you let this [AI] go wild, it can cause profound damage to society”, said Holger Hoos, professor of machine learning at Leiden University. He said that he expected Canada to join the trio soon.

“AI is a game of critical mass. Japan can’t compete with China on AI, so they need allies. And the same goes for Canada,” he said.

China’s approach to AI was to put its development under the control of the “government-state”, he said. Meanwhile, the US – which has a less developed national AI strategy than most other major economies – has allowed AI development to be dominated by private technology companies, argued Professor Hoos. He is one of the founders of the Confederation of Laboratories for Artificial Intelligence Research in Europe, which is pushing for the continent to remain competitive in AI research while leading on ethical, legal and social issues.

The “European way” was an attempt to find a “balance” between “government, industry and individual”, he said, an approach Japan supported too.

Countries from Finland to India, plus the European Union, have devised AI strategies in the past few years, responding to predictions that the technology will upend the economy and society, for example displacing jobs, allowing algorithm-based sentencing for criminals, and even unleashing “killer robots”.

This new alliance between Germany, France and Japan was “quite a logical and natural expansion of the EU’s position on AI”, explained Sophie-Charlotte Fischer, a researcher on AI and international relations at ETH Zurich.

By establishing itself as a world leader in “ethical” AI, the EU hoped to set standards for the rest of the world: “They have selected this as their niche,” she explained.

France’s AI strategy has called for the creation of interdisciplinary institutes, involving social scientists and philosophers. The German strategy, released last year, established a plethora of observatories, dialogues and councils to make sure AI “serves the good of society”.

Japan has also used its presidency of the G20 group of nations to push for a common, global body to oversee the development of AI, Ms Fischer added.

It was “unfair”, however, to say that China – which in 2017 launched its own strategy, aiming to lead the world by 2030 – was not thinking about ethics, she argued. In May this year, Chinese universities and companies signed up to the Beijing AI Principles, which commit to “privacy, dignity, freedom, independence and rights”.

Whether China’s authoritarian government would heed these principles was “hard to tell”, she acknowledged, but “as a signal it’s quite noteworthy” and may indicate that Beijing was “open to dialogue about how AI is used”.

Still, “one advantage the EU has is that it’s a credible actor. It’s harder to believe when China puts these principles forward,” Ms Fischer added.

For now, the joint funding from Germany, France and Japan was a pilot, explained Dr Sangenstedt, “but possibly it will be the starting point for a discussion about regular calls”.

Print headline: New alliance amplifies global split on AI ethics