With the final rules for the 2021 research excellence framework announced towards the end of last year, anxiety levels are beginning to rise again. And women could be forgiven for feeling particularly nervous.

A recent study led by Friederike Mengel of the University of Essex found that, on average and all other things being equal, student evaluations gave female university instructors marks 37 points lower than those given to men on a 100-point scale. Meanwhile, sexism and racism abound in the assessment of student essays, which is why almost all universities use anonymous marking. Consciously or not, academics often introduce biases: we look at the writer's name and give higher marks to men and lower marks to students with ethnic minority names. Does it not seem odd, then, that papers submitted for peer review by REF panels are not similarly anonymised?

To their credit, the funding bodies openly acknowledge the issue of bias. Their Initial Decisions on the Research Excellence Framework 2021, published in September, state that “there will be mandatory, bespoke equality and diversity briefings and mandatory unconscious bias training provided for panellists involved in selection decisions (the main and sub-panel chairs)”. This shows willingness, but these are weak solutions for two reasons: first, most REF panel members will not receive the training; second, changing attitudes is not the same as changing behaviour, particularly when the bias is unconscious.

The REF review process contains other safeguards. Panellists are trained to promote consistency in their ratings. Each paper is reviewed by two panellists, one a subject specialist and the other a generalist. Their marks are then discussed by the whole sub-panel. These measures promote accountability and may reduce some types of bias.

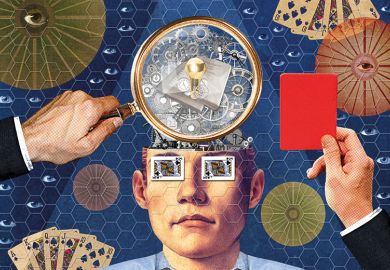

However, in addition to sexism and racism, there are at least three other possible biases in REF reviews, arising from the fact that the names of the author, institution and journal are all made known. Panellists are human, and humans use heuristics (rules of thumb), particularly under pressure and time constraints. Bias occurs when panellists, consciously or unconsciously, rate some aspect of the scholar, their institution or the journal rather than the paper itself. It is easy to envisage a poor paper by a renowned scholar, or from a renowned university, being marked more highly than its merit justifies, or a brilliant paper by an unknown scholar or from a less renowned university being undervalued. Similarly, a brilliant paper in a lower-tier journal might well be unfairly marked down, while a mediocre paper in a higher-tier journal is unduly rewarded. Journals have their own biases (editors steer them in their preferred direction, encouraging authors and papers they like), and the REF should not reinforce them. At a minimum, REF reviews are likely to contain elements of confirmation bias and authority bias.

Some commentators may say that using heuristics is acceptable, even useful. But that would defeat the purpose of the REF: if a paper's quality can be judged from metrics of scholarly impact such as the journal impact factor (as has been suggested), then there is no need for REF panellists to review it at all. Most ironically, if a study's quality can be inferred from the author's institutional affiliation, then the REF is a circular exercise, because gauging institutional quality is precisely what it aims to do.

There is no perfect way to reduce bias, but anonymous assessment of the kind already used for student work is the least bad option. Removing author names, institutional affiliations and journal titles could probably be automated. If the software does not already exist, writing it could become a student computer science project, or the work could be put out to tender. Failing that, low-tech options would be to require universities to anonymise their submissions beforehand, or for the REF team to do so before distributing submissions to panellists. The cost would be a drop in the ocean, and anonymisation would be worth doing even if it were not.

But wouldn’t reviewers know who wrote the papers anyway? The subject specialist may sometimes know or suspect, but probably not often, so anonymisation would still add a layer of protection. Nor do I think that panellists, if explicitly told not to, would simply google a study’s title to find out. Deliberately subverting the REF process is very different from being swayed subconsciously; besides, it would take time and effort, and would risk censure.

I suspect that anonymous marking would prove popular among REF reviewers – or, at least, those who do not consciously use the heuristic devices discussed above. Speaking for myself, I much prefer to mark student papers that are anonymous, not just because it is fairer but also because the students know that it is.

Graham Farrell is professor of international and comparative crime science at the University of Leeds.
