I have a pet theory about the modern workplace. It seems to me that there are two types of people, or at least two types of job. The first have to fit meetings in around whatever it is that they do for a living, while for the second, having meetings is the job. The means seem to have become the end.

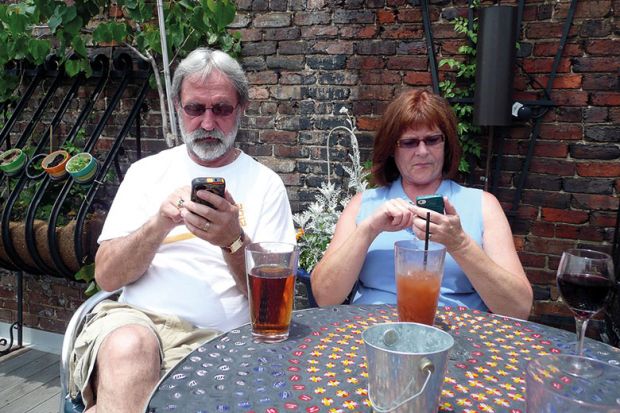

I wonder if something similar is happening with social media. This, after all, was supposed to be a tool to enhance one’s life – not replace it. Too often, it now also appears to be an end in itself.

You will have a view on whether this holds true in the personal lives of those around you (for younger members of the family, just replace Facebook with Fortnite). But it is also worth considering in the context of the workplace – particularly in professions that require a public profile.

People who work in universities, whether in academic roles or otherwise, should be available, open and influential, and social media seems – or seemed – to be the ideal way to achieve those things.

It is easy, it doesn’t cost anything other than time (more on this later), and it quickly hooks users on likes and retweets, virtue signalling or trolling, depending on their disposition.

You may detect a note of cynicism here. Roll back 10 years, and the hope was that social media would be a panacea for the ills of human division and misunderstanding. For better or worse, it has delivered on its promise to bypass traditional gatekeepers of information and communication.

In our news pages, we interview the Australian Nobel laureate Peter Doherty, who is scathing about the stranglehold that traditional media once had, and who strongly advocates direct communication online between academics and members of the public.

But a decade on from the start of the social media revolution, you could also make a case that it has served up a self-indulgent, polarising mirage. The cliché casts it as an echo chamber that makes us feel as though we are doing our job, winning the arguments and improving the world, when in fact we’re just spending vast swathes of time talking to small, selective audiences, or fuelling pointless bunfights, while confusing an illusion of impact with the real thing.

This jaded view is behind the growing disquiet about our social media age, which we explore in a higher education context in our cover story this week.

Perhaps most striking is the sheer amount of time that this human-human/human-troll/human-bot interaction is sucking out of people’s personal and professional lives.

Don’t misunderstand me: social media is brilliant for all sorts of things and it would be hard to imagine life without it.

But – to use my own profession as an example – you only have to look at the proportion of news stories that amount to “X said Y on Twitter” to see how dominant the medium has become (the US president is a Twitter troll for goodness’ sake) – and how little it often amounts to.

As for academia, you will have your own views on how genuine the impact of hours, days, weeks and months spent on Twitter actually is, how much it enhances your professional life and prospects, and what the opportunity cost may be.

The extent to which these channels are solving or exacerbating higher education’s own echo chamber, and helping or harming its standing with the real public (rather than the Twitterati) and its core missions of teaching and research, is worthy of serious reflection (something Twitter itself is notoriously bad at).

Is social media improving or distracting from more meaningful forms of engagement, collaboration and scholarly output? No doubt there are as many answers as there are individuals – that’s the point of social media: it is a tool to be used as you see fit. And, to repeat, this is not to deny the undoubted value that it can bring to our personal and professional lives when it is used effectively.

But who has not occasionally had a moment of realisation or horror, when another hour or three has disappeared into the social black hole, and thought: how did it come to this?

POSTSCRIPT:

Print headline: Busy with your social life?
