Two conferences were held in England in 1996 on the topic defined by the interchangeable titles of these books: one, under the aegis of the Royal Institute of Philosophy, at the University of Reading, the other at the University of Sheffield Hang Seng Centre for Cognitive Studies. The 12 chapters of John Preston's book, and the 14 chapters of Peter Carruthers and Jill Boucher's book, form the proceedings of the Reading and the Sheffield conferences respectively. As such, they will be of interest chiefly to professional academics and graduate students working on philosophy of mind or the cognitive sciences.

Nominally, the primary orientation of Preston's book is to the discipline of philosophy, while the Carruthers and Boucher book describes itself as interdisciplinary, but this contrast is more apparent than real. The fact is that anyone discussing the relationship between thought and language intelligently at the end of the 20th century must draw on a mixture of concepts and findings borrowed from philosophy, psychology, linguistics and perhaps computing; and all these writers do.

The question that runs through most of their contributions might be expressed as follows. Is language mainly a device for interpersonal communication, used to lend concrete expression to thoughts that occur within individuals independently of language; or are languages the vehicles of thought, so that individuals' internal lives would be dependent on language whether or not the individuals communicated with one another?

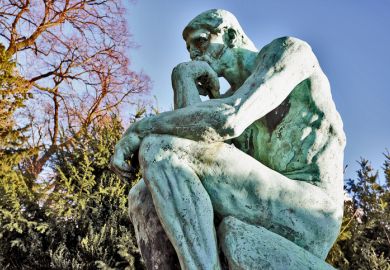

The question does not sound like the kind that can be answered definitively by one conference (or even two), and the contributors do not claim to settle it. If there is anything on which most of them agree, it is perhaps that they tend to see both thought and language as bound up with interaction (whether minds interacting with other minds, or humans interacting with their environment more generally). The image epitomised by Rodin's Penseur, of a solitary individual silently and motionlessly thinking, is given short shrift by most contributors to these volumes. By implication they see it as atypical and misleading.

While these books cannot be said to resolve the question about the dependence of thought on language, this does not mean that they merely rehash standard positions and leave us no further forward. They introduce plenty of empirical evidence that is highly relevant and not generally known. This is true of the Royal Institute of Philosophy volume, as well as the "interdisciplinary" one. One of the most interesting contributions is the chapter in the former by psychologist Lawrence Weiskrantz, who analyses intelligent behaviour in, for instance, pre-verbal infants and monkeys. Rosemary Varley, in the Carruthers and Boucher book, discusses experiments with an aphasic patient, which seem to establish that propositional reasoning cannot require the ability to formulate propositions explicitly.

Although the various contributions to the two books do not line up neatly as "philosophy" vs "cognitive science", in the way that the conference backgrounds might suggest, they do group into two strikingly distinct categories. The difference is one that I have often noticed in recent years, and it seems to be a generational one. Not knowing the ages of many of these authors, I cannot check how well the generalisation applies to them; but there does seem to be a characteristic difference between the writing of academics born before and after the mid-century. Older writers of course learned their craft by studying the work of others, and they take for granted that their readers share much of that background; but, when they offer their own contributions, they found their case on their own power of argument. They strive to make the intellectual weather, as it were. Not all can succeed, but this is what they see as the aim, and what they did when they were young themselves: Gilbert Ryle wrote as self-confidently in his early thirties as he did later in The Concept of Mind, for instance. Younger writers, by contrast, seem frequently to be glancing over their shoulder, anxious to defer to possessors of some authority to which they themselves do not aspire.

Sometimes the authority belongs to one of the branches of the political thought-police who infest 1990s public life. For instance, it is difficult to imagine any other explanation for Stephen Laurence's hasty conclusion, in the Carruthers and Boucher book, that language cannot be a cultural artefact because "there is no known correlation between the existence or complexity of language with cultural development, though we would expect there would be if language were a cultural artefact". The point would need more discussion even if it were true, but Laurence's premiss is simply false: for instance, lexical complexity correlates with cultural development, not just with respect to words for sophisticated technology but with respect to core vocabulary. Brent Berlin and Paul Kay had no motive to stress this point when they wrote Basic Color Terms in 1969 (they wanted to claim that vocabulary was largely innate rather than culturally determined), but their data forced them to recognise that it is primitive societies that have few basic colour terms. However, the thought-police of the race relations industry do not like such things to be mentioned, so younger academics train their minds not to stray down such avenues. One cannot know whether this is what lies behind Laurence's conclusion, but it is difficult to see what else could justify so brief and glib a treatment of such a crucial issue.

More often, the deference is expressed through writers orienting their own ideas around the writings of some acknowledged academic "star", rather than letting them stand on their own merits. Of course, where a classic publication genuinely has redefined the terms of subsequent debate it is appropriate to quote it. But rare cases of that type are presumably as likely to emerge from, say, New Zealand or India, as from anywhere; philosophical brilliance is not sensitive to national boundaries. The modern academic star system does not work that way; those deferred to always come from the highest-prestige institutions in the world's richest country, because that is where the authority to confer star status is seen as residing.

Many of the contributors to these volumes, for instance (including the editors of both of them) treat Jerry Fodor's 1975 book The Language of Thought as "canonical". Now Fodor (who was not a participant at either conference) may have produced good work in other publications (I am not familiar with his oeuvre as a whole); but it is hard to see The Language of Thought as a book that ought to be heavily influencing the subject more than 20 years after its publication. It propounded a very odd idea - that utterances in ordinary languages such as English must be translations of thoughts couched in a different, innate language, later called "Mentalese" - and it defended this idea with arguments whose inadequacy was blatant. Most people would surely suppose that, if we can be said to think in a language at all, this is likely to be the language that we speak; but according to Fodor in 1975, "The obvious (and, I should have thought, sufficient) refutation of the claim that natural languages are the medium of thought is that there are nonverbal organisms that think."

How could that refutation possibly be sufficient? We may well agree that some non-human animals do some thinking; for instance, Weiskrantz comments about one of the monkey experiments he describes, "if you doubt that monkeys think in this situation, humans in the same situation certainly say they do!" But surely most people suppose that human thought is qualitatively richer than the thought of non-verbal organisms; if so, it seems reasonable to suggest that this is (in part, at least) because we have languages such as English to think in. A position much like this is well argued by Andy Clark in the Carruthers and Boucher volume, but it is strange to find the authority of Fodor's book treated as making Clark's position an extreme, almost quixotic one.

One notable manifestation of the new deferentialism is that, although most contributors to these volumes are British, both books bring in an American to provide the summing-up chapter. In fact they use the same American, Daniel Dennett; and, while the titles of his contributions to the respective books are quite different, much of their content is word-for-word identical (and apparently identical to material Dennett had published before), with the insertion of brief nods in the direction of some of the speakers at these conferences. An air is created of the district commissioner handing out metaphorical pats on the head to one group of locals, before climbing wearily back into the Land Rover and heading off to give his standard speech at the next location deeper in the bush.

Each of these books contains a mixture of contributions in what I have described as older and younger styles. But the individual chapters are easy to assign to one style or the other, and the Preston volume contains a higher proportion of "older" and fewer "younger" contributions than the Carruthers and Boucher volume. For that reason, the former strikes me as, on balance, more valuable. Deference has a valid role in the political life of a nation (ironically, the arena in which British academics are least inclined to accord it). Intellectual life, on the other hand, is a republic of equals or it is nothing.

Geoffrey Sampson is reader in computer science and artificial intelligence, University of Sussex.

Thought and Language

Editor - John Preston

ISBN - 0 521 58741 7

Publisher - Cambridge University Press

Price - £15.95

Pages - 249
