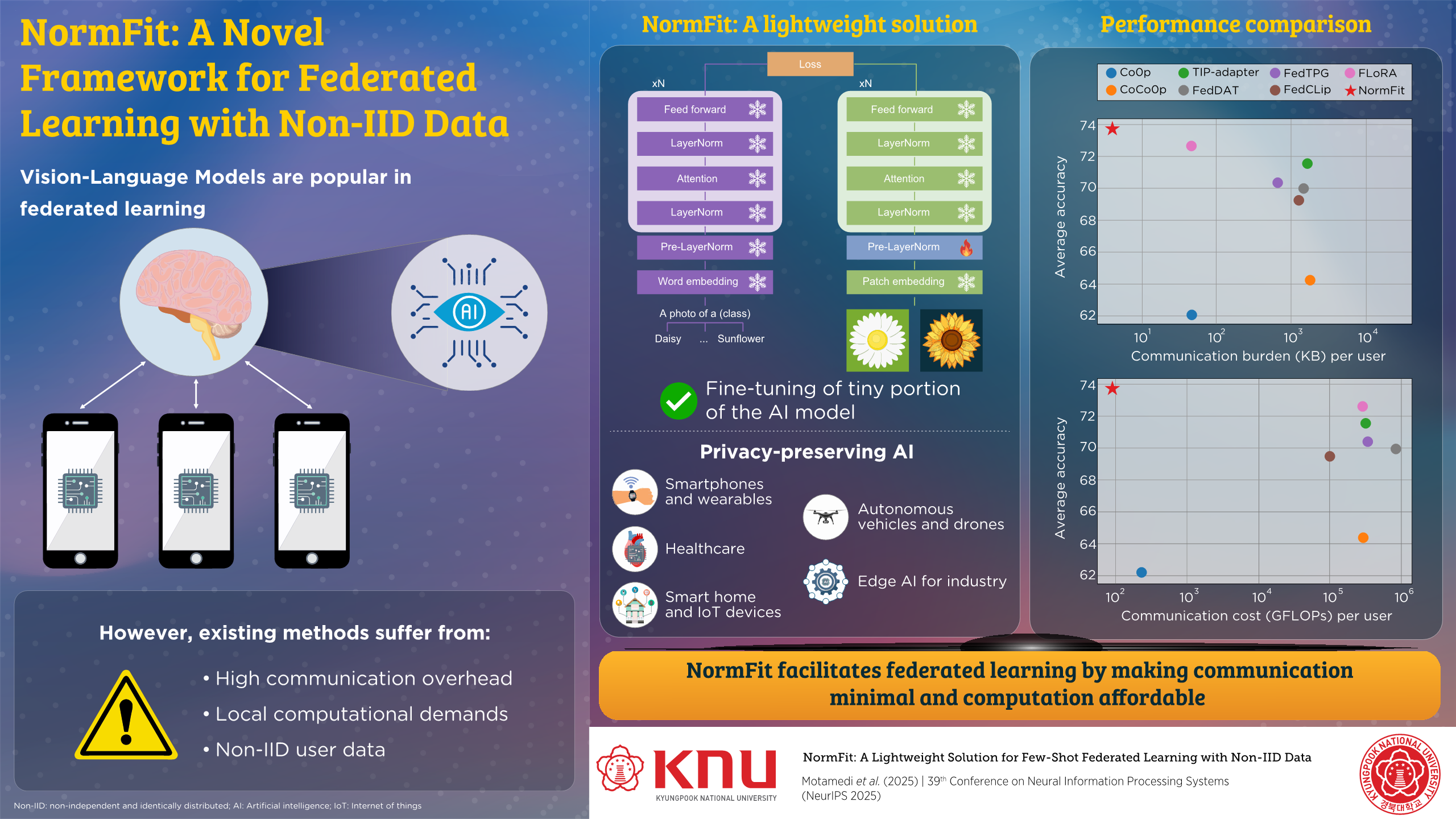

Proposing NormFit Framework for Federated Learning with Non-IID Data

The lightweight solution fine-tunes a tiny portion of model parameters while still achieving remarkably high accuracy.

Sponsored by

Vision-Language Models (VLMs) are increasingly used in Federated Learning. However, existing techniques struggle with communication overhead, heavy computational demands, and non-IID user data. Scientists from Kyungpook National University and Queen’s University have recently proposed NormFit, a lightweight solution that fine-tunes only a tiny fraction of a model’s parameters, achieving high accuracy at low communication cost. The approach could make it far easier to deploy AI in everyday technology.

In recent years, Vision–Language Models (VLMs) have gained immense popularity in Federated Learning, a promising approach to decentralized machine learning, because of their strong and reliable performance. In particular, few-shot adaptation of pre-trained VLMs such as Contrastive Language–Image Pretraining (CLIP) significantly improves accuracy on downstream tasks. Despite these advances, existing approaches still face major limitations: heavy communication overhead, high local computational costs, and poor performance when user data are not independent and identically distributed (non-IID).

A team of researchers from South Korea and Canada, including Associate Professor Jae-Mo Kang from the Department of Artificial Intelligence at Kyungpook National University, has now tackled all of these challenges at once. Their findings were presented at the 39th Conference on Neural Information Processing Systems (NeurIPS 2025).

The study introduces NormFit, a lightweight solution designed for few-shot Federated Learning under non-IID data conditions. The framework makes AI training substantially lighter, faster, and more accessible, particularly for devices with limited computing resources. Rather than updating millions of parameters, NormFit fine-tunes only a tiny fraction of an existing model (less than 0.001% of its total parameters) while still delivering higher accuracy and far lower communication costs than prior methods.

Dr. Kang explains, “We found a way to make advanced AI models learn efficiently even when data comes from many different people and devices—without needing heavy hardware or expensive data transfers.”

NormFit is specifically designed for Federated Learning, where data remain on personal devices instead of being sent to a central server. This enables privacy-preserving AI across a wide range of applications, including smartphones and wearables, healthcare systems, smart homes and Internet-of-Things devices, autonomous vehicles and drones, and industrial Edge AI.

With NormFit, features such as photo classification, personalized assistants, or activity recognition can adapt using private user data without draining batteries or requiring large downloads. In healthcare, hospitals and clinics can collaboratively train models on medical images or patient records without sharing raw data, improving diagnostic tools while maintaining confidentiality.

The approach also benefits connected environments. Cameras, sensors, and home appliances can adapt to local conditions while sending only lightweight updates, reducing network congestion and improving responsiveness. Vehicles can learn from their surroundings and share minimal updates, supporting safer navigation without transmitting massive sensor streams. In factories, robots and inspection systems can collaborate to enhance defect detection and predictive maintenance with far lower communication demands.

The team believe NormFit-style lightweight learning will reshape how AI is deployed in everyday technology. “We envision that more AI models will learn directly on people’s devices—phones, cars, appliances—without ever exposing personal data. This will increase public trust and reduce privacy violations. Additionally, by reducing computation by orders of magnitude, such methods lower energy use across millions of devices. This can drastically cut the environmental footprint of AI training,” highlights Dr. Kang.

Lightweight fine-tuning also lowers the barrier for smaller organizations, allowing them to adopt AI without large server infrastructure. As devices grow smarter, Federated Learning is expected to underpin distributed AI, and approaches like NormFit can keep communication minimal and computation affordable.

Reference

Title of original paper

NormFit: A Lightweight Solution for Few-Shot Federated Learning with Non-IID Data

Conference

39th Conference on Neural Information Processing Systems (NeurIPS 2025)

DOI

N/A

About Kyungpook National University

Kyungpook National University (KNU) is a national university located in Daegu, South Korea. Founded in 1946, it aims to become a leading global university, building on its long-standing tradition of truth, pride, and service. As a comprehensive national university representing Daegu and Gyeongbuk Province, KNU strives to lead Korea’s national and international development by fostering talented graduates who can serve as global community leaders.

Website: https://en.knu.ac.kr/main/main.htm

About the author

Prof. Jae-Mo Kang received his PhD in electrical engineering from KAIST in 2017. He completed postdoctoral training in the Department of Electrical and Computer Engineering at Queen’s University and served as an Assistant Professor at Sejong University. He is now an Associate Professor in the Department of Artificial Intelligence at Kyungpook National University, South Korea. His research focuses on deep learning, generative AI (including VLMs and LLMs), multimodal AI, trustworthy AI, and computer vision. He is listed among the world’s top 2% of scientists by Elsevier and won first prize in the SoccerNet Dense Video Captioning Challenge at CVPR 2024.