Proposing Distorting Embedding Space for Sexual Content Generation Prevention

The innovative technology can help curb digital sex crimes, secure Intellectual Property, and facilitate AI integration.

Sponsored by

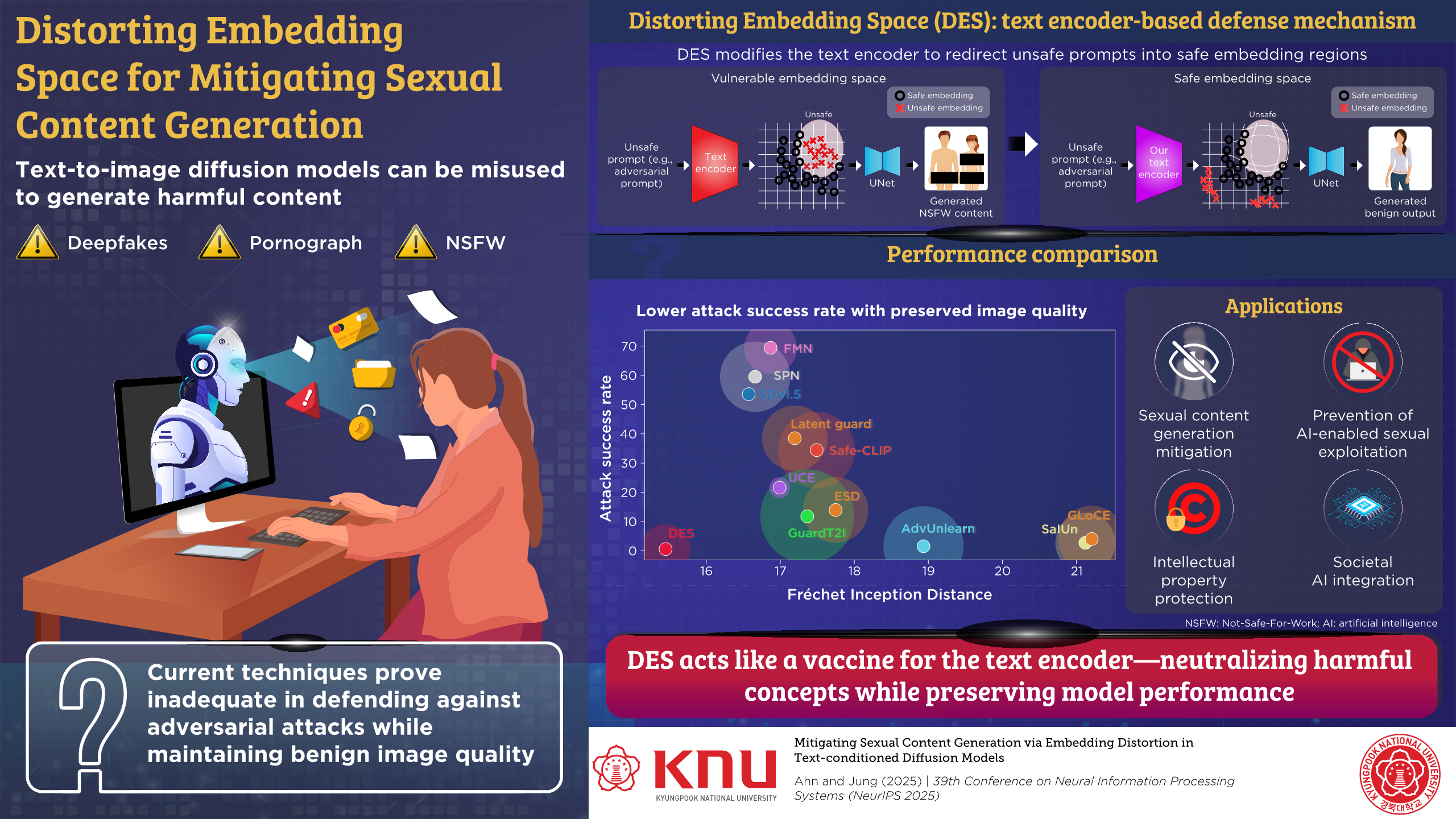

Diffusion models demonstrate remarkable image generation performance, but risk generating sexual content. Addressing this issue, researchers from Kyungpook National University have proposed Distorting Embedding Space, a text encoder-based defense mechanism that effectively neutralizes harmful concepts like sexual content and violence at the embedding level. This technology is expected to help prevent digital sex crimes, act as a shield for Intellectual Property, and smooth AI integration.

Diffusion models exhibit excellent image generation performance via text prompts. However, they can be easily misused to generate sexual content. Current approaches, including prompt filtering, concept removal, and sexual content mitigation, prove inadequate in defending against adversarial attacks while maintaining benign image quality.

Recently, Ph.D. student Jaesin Ahn and Associate Professor Heechul Jung from Kyungpook National University have proposed a novel defense method: Distorting Embedding Space (DES). Their findings have been presented at the 39th Conference on Neural Information Processing Systems (NeurIPS 2025).

DES fundamentally changes how AI safety is approached by editing the model's "knowledge" rather than just filtering its inputs or outputs. Previous methods worked like a keyword blacklist or damaged the model's overall creativity to ensure safety. In contrast, DES acts like a vaccine for the text encoder. It effectively neutralizes harmful concepts, such as sexual content or violence, at the embedding level while preserving the model’s ability to generate high-quality, benign images.

It is particularly noteworthy that DES successfully defends against adversarial attacks, in which malicious users try to trick the AI with uninterpretable prompts to bypass safety filters. Furthermore, this robust defense is highly efficient, requiring only about 90 seconds of training and adding no time to the image generation process itself. This combination of high security, preserved image quality, and efficiency sets the present research apart from existing solutions.

The most immediate and critical application is the prevention of digital sex crimes, such as the creation of non-consensual deepfakes or pornographic content using generative AI. By integrating DES into popular text-to-image services or open-source models, platforms can proactively stop users from generating harmful images of specific individuals, even if the users attempt to bypass restrictions with adversarial prompts.

Crucially, this technology serves as a powerful shield for Intellectual Property (IP). “A major issue with generative AI is that it often reproduces copyrighted concepts without permission. Our method, DES, addresses this by protecting not just specific characters or art styles, but a wide variety of visual concepts. For instance, it can effectively block the unauthorized generation of famous figures like Pikachu or SpongeBob, as well as the signature visual look of major franchises like Studio Ghibli. This empowers creators and companies to protect their brands and earnings in the AI era,” highlights Mr. Ahn.

Additionally, since DES does not slow down the image generation process at all, it is well suited to real-time mobile apps where speed and efficiency are essential.

According to Dr. Jung: “In 5 to 10 years, generative AI will likely be an integral part of education, entertainment, and creative industries. Our research contributes to a future where ‘Safe-by-Design’ AI is the standard, profoundly affecting daily life in various ways.”

The present work will reshape the digital environment for families and educators. As AI models internalize ethical judgments to filter out harmful content at the source, parents and teachers will be able to let children use creative AI tools freely without the constant fear of exposure to inappropriate or traumatic material.

The innovation safeguards human creativity by building copyright awareness directly into AI. Artists and IP holders can confidently coexist with the technology, knowing that AI amplifies creative work rather than exploiting it. The result is a future where people trust AI’s benefits without fear of deepfakes or intellectual property theft.

Reference

Title of original paper

Mitigating Sexual Content Generation via Embedding Distortion in Text-conditioned Diffusion Models

Conference

39th Conference on Neural Information Processing Systems (NeurIPS 2025)

Link

https://neurips.cc/virtual/2025/loc/san-diego/poster/120328

About Kyungpook National University

Kyungpook National University (KNU) is a national university located in Daegu, South Korea. Founded in 1946, it is committed to becoming a leading global university based on its proud and lasting tradition of truth, pride, and service. As a comprehensive national university representing the regions of Daegu and Gyeongbuk Province, KNU has been striving to lead Korea’s national and international development by fostering talented graduates who can serve as global community leaders.

Website: https://en.knu.ac.kr/main/main.htm

About the authors

Mr. Jaesin Ahn received an M.S. degree from the School of Electronic and Electrical Engineering at Kyungpook National University, Daegu, South Korea, in 2021. He is currently pursuing a Ph.D. degree in the Department of Artificial Intelligence at Kyungpook National University. His research interests include deep learning and computer vision.

Dr. Heechul Jung received a Ph.D. degree in electrical engineering from the Korea Advanced Institute of Science and Technology (KAIST) in 2018. He is currently an Associate Professor in the Department of Artificial Intelligence, Kyungpook National University, South Korea. His research interests include deep learning and computer vision.