The recent fall from grace of the Cornell University food marketing researcher Brian Wansink is very revealing of the state of play in modern research.

Wansink had for years embodied the ideal to which all academics aspire: innovative, highly cited and media-friendly. But, in September, Cornell found against him on a range of academic misconduct charges, including “misreporting of research data, problematic statistical techniques, failure to properly document and preserve research results, and inappropriate authorship”. Wansink tendered his resignation.

His research, now criticised as fatally flawed, included studies suggesting that people who go grocery shopping while hungry buy more calories, that pre-ordering lunch can help you choose healthier food, and that serving people out of large bowls leads them to eat larger portions.

Such studies have been cited more than 20,000 times and even earned him an appearance on The Oprah Winfrey Show. But Wansink was accused of manipulating his data to achieve more striking results. Underlying it all is a suspicion that he was in the habit of forming hypotheses and then searching for data to support them. Yet, from a more generous perspective, this is, after all, only scientific method.

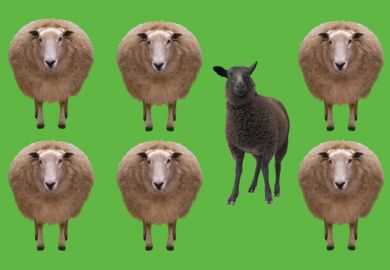

Behind the criticism of Wansink lies a critique not only of his work but of a certain kind of study: one that, while it might have quantitative elements, is in essence ethnographic and qualitative, its chief value being in storytelling and interpretation. The quantitative “errors” spotted in such multifaceted research studies are ammunition in what is really a much broader metaphysical battle.

We forget too easily that the history of science is rich with errors. In a dash to claim glory before Watson and Crick, Linus Pauling published a fundamentally incoherent hypothesis that the structure of DNA was a triple helix. Lord Kelvin misestimated the age of the Earth by more than an order of magnitude. In the early days of genetics, Francis Galton introduced an erroneous mathematical expression for the contributions of different ancestors to individuals’ inherited traits. We forget because these errors were part of broader narratives that came with brilliant insights.

I accept that Wansink may have been guilty of shoehorning data into preconceived patterns – and in the process may have mixed up some of the figures too. But if the latter is unforgivable, the former is surely research as normal. The critics are indulging themselves in a myth of neutral observers uncovering “facts”, which rests on a view of knowledge as pristine and eternal as anything Plato might have dreamed of.

It is thanks to Western philosophy that, for thousands of years, we have believed that our thinking should strive to eliminate ideas that are vague, contradictory or ambiguous. Today’s orthodoxy is that the world is governed by iron laws, the most important of which is if P then Q. Part and parcel of this is a belief that the main goal of science is to provide deterministic – cause and effect – rules for all phenomena. All experiments rely on correlations, predictability and replicability.

And then there’s the law of non-contradiction. This was first formulated by Parmenides but Plato also refers to it in the Sophist, phrasing it as: “Never shall this be proved: that things that are not, are.”

However, whatever today’s notions of research validity, the fact is that Parmenides’ law of non-contradiction in itself is far from uncontroversial. Indeed, it represented a radical break from the Ionian philosophy of nature that preceded it. This older philosophy was based on observation and experience in the ordinary sense.

The difference in approach resulted from a disagreement about what the ancients believed were the proper objects of thought: the complexities of the messy real world or abstractions from a pristine but purely theoretical one. And today this remains an issue at the heart of broad swathes of research, expressed and fought over in terms of logic and statistical validity.

Plato attempted to avoid contradictions by isolating the object of inquiry from all other relationships. But, in doing so, he abstracted and divorced those objects from a reality that is multi-relational and multitemporal. This same artificiality dogs much research.

Mathematicians and meteorologists have been grudgingly working towards acceptance of this at least since the work of chaos theory pioneer Edward Lorenz in the 1960s. Economists and biologists, too, now work with chaotic systems, in which prediction of effects is physically and logically impossible.

But what is less appreciated is that science has never really been about predicting. As the computer scientist Noson Yanofsky puts it in his 2013 book The Outer Limits of Reason: “What is important in science and what makes science significant is explanation and understanding.”

Even if the quantitative elements don’t convince and need revising, studies like Wansink’s can be of value if they offer new clarity in looking at phenomena, and stimulate ideas for future investigations. Such understanding should be the researcher’s Holy Grail.

After all, according to the tenets of our current approach to facts and figures, much scientific endeavour of the past amounted to wasted effort, in fields with absolutely no yield of true scientific information. And yet science has progressed.

Martin Cohen is visiting research fellow in philosophy at the University of Hertfordshire. His latest book, I Think Therefore I Eat, on food science, is published by Turner.

POSTSCRIPT:

Print headline: There are worse sins
