Last week's Budget surprise has stirred panic and split opinion among vice-chancellors. The Times Higher investigates how the future of funding could pan out.

Farewell RAE, welcome RAM. A few words from the Chancellor last week seemed to consign the research assessment exercise to the bin. But how different will a research appraisal system be if it replaces the views of academic referees with measures of research quality? And what impact will "research assessment metrics" have on institutions?

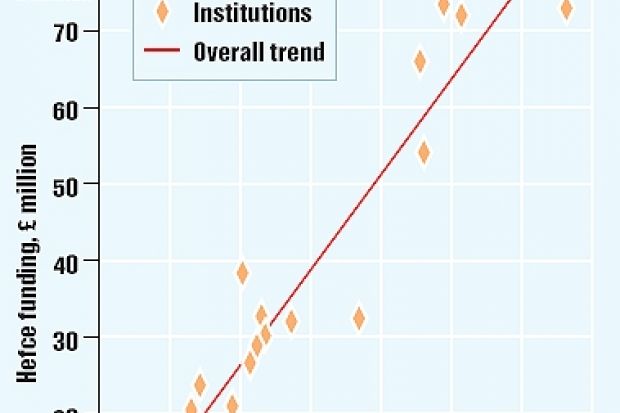

Using income from research council grants as a proxy for block research grants from the funding councils - as illustrated in a graph published in the Government's "next steps" report - is a seductively simple but crude way of replacing the RAE.

The impact on different universities would be surprising and dramatic, but it would depend on precisely what is included in the model. There is more than one way of using the data.

Consider a simple model based on another approach under discussion at the Treasury: allocating block research grants on the basis of the total research grant income earned by each institution. We have compared the results with mainstream block grants as currently allocated.

As the table above shows, under such a system Imperial College London, King's College London and the London School of Hygiene and Tropical Medicine would be among the biggest winners; the universities of Manchester and Reading, Royal Holloway (University of London) and the London School of Economics would be the biggest losers.
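The comparison can be sketched in a few lines, assuming the simplest possible rule: a fixed pot of block grant is shared in proportion to each institution's research grant income, and each institution's new share is set against its current allocation. The institutions and figures below are hypothetical and chosen only to make the arithmetic easy to follow; they are not the data behind the table.

```python
# Illustrative sketch only: hypothetical institutions and figures (in £m),
# not the real data behind the comparison in the article.

def allocate_by_income(pot, grant_income):
    """Share a fixed block-grant pot in proportion to each institution's grant income."""
    total = sum(grant_income.values())
    return {inst: pot * income / total for inst, income in grant_income.items()}

def compare(current, proposed):
    """Change in block grant per institution (positive = winner, negative = loser)."""
    return {inst: proposed[inst] - current[inst] for inst in current}

current_block = {"Univ A": 50.0, "Univ B": 30.0, "Univ C": 20.0}  # hypothetical current grants
grant_income = {"Univ A": 120.0, "Univ B": 40.0, "Univ C": 40.0}  # hypothetical grant income

pot = sum(current_block.values())  # keep the total pot unchanged
proposed = allocate_by_income(pot, grant_income)
changes = compare(current_block, proposed)

# List institutions from biggest winner to biggest loser.
for inst, delta in sorted(changes.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{inst}: {delta:+.1f}")
```

Holding the pot constant makes the exercise zero-sum: any institution whose share of grant income exceeds its current share of block grant gains, and the others lose by the same total amount.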

In practice, the system would be much more complex than last week's announcement suggests. It will be essential, first, to consider two key components of the current system. The RAE provides a metric: the grade awarded to each unit of assessment, or subject area. That grade, together with the number of research staff, determines the sum of money allocated to institutions.
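The two components can be illustrated with a deliberately simplified sketch, assuming that each unit's "volume" is a grade weight multiplied by its number of research staff and that a fixed pot is shared in proportion to volume. The grade weights and figures are hypothetical, and the funding councils' actual formula is not reproduced here.

```python
# Simplified, hypothetical sketch of grade-times-staff allocation.
# The weights below are illustrative assumptions, not official funding weights.
GRADE_WEIGHT = {"5*": 4.0, "5": 3.0, "4": 2.0, "3a": 1.0}

def rae_style_allocation(pot, units):
    """units: list of (institution, grade, staff). Returns money per institution."""
    volume = {}
    for inst, grade, staff in units:
        # Each unit contributes its grade weight multiplied by its staff numbers.
        volume[inst] = volume.get(inst, 0.0) + GRADE_WEIGHT[grade] * staff
    total = sum(volume.values())
    return {inst: pot * v / total for inst, v in volume.items()}

units = [
    ("Univ A", "5*", 40),  # hypothetical units of assessment
    ("Univ A", "4", 20),
    ("Univ B", "5", 40),
    ("Univ C", "3a", 80),
]
print(rae_style_allocation(100.0, units))
```

The point of the sketch is that the metric (the grade) and the volume (staff numbers) are separate inputs; a metrics-based replacement has to say what stands in for each.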

In the new system, how will the metrics be defined and then used to calculate funding? This is a difficult issue because no single metric can be applied to all types of research or all types of institution.

A version of the Treasury's graph reproduced above shows a strong correlation at institutional level between research council funding and grants allocated on the basis of RAE results. But the graph aggregates data for science, engineering, social science and the humanities, so it obscures variations between these subject areas.

Under any new system, a range of measures needs to be tracked - some relating to funding inputs, some to the training and activity of staff and others to research outputs and outcomes. Output measures based on bibliometrics - statistics on citations and numbers of research papers - work well for core science and can work well in engineering. But they are disputed in the economic and social sciences and have little to say about the arts and humanities. Finally, the cycle of assessment has to be set.

Metrics could allow continuous - or at least annual - recalibration. It might be better to opt for a two-tier system of light-touch or "dipstick" metrics that gauge whether performance has changed much relative to a benchmark.

A more intensive audit using a wider range of metrics, perhaps with some peer review, could be used when big changes are detected; such audits might occur every three or four years. A two-tier metrics-based system could promote better research management in universities, building on the work achieved for the RAE.
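A minimal sketch of the two-tier idea, assuming a single light-touch indicator per institution and a hypothetical rule that a fuller audit is triggered when the indicator moves more than 20 per cent away from its benchmark. The threshold, indicator and figures are illustrative assumptions rather than part of any announced scheme.

```python
# Hypothetical two-tier "dipstick" check: the threshold and data are illustrative only.

def dipstick(current, benchmark, threshold=0.2):
    """True if the indicator has moved more than `threshold` (a fraction)
    away from its benchmark, which would trigger a fuller audit."""
    if benchmark == 0:
        return current != 0
    return abs(current - benchmark) / abs(benchmark) > threshold

def institutions_to_audit(indicators, benchmarks, threshold=0.2):
    """Names of institutions whose light-touch indicator has shifted enough to audit."""
    return sorted(inst for inst, value in indicators.items()
                  if dipstick(value, benchmarks[inst], threshold))

benchmarks = {"Univ A": 100.0, "Univ B": 80.0, "Univ C": 50.0}  # hypothetical benchmarks
this_year = {"Univ A": 105.0, "Univ B": 60.0, "Univ C": 65.0}   # hypothetical annual readings

print(institutions_to_audit(this_year, benchmarks))
```

Here only the institutions whose indicator has fallen or risen sharply are flagged, so the costlier second tier, with its wider metrics and possible peer review, is spent where change has actually occurred.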

Jonathan Adams, director, Evidence Ltd.
