What makes a world-class university? When Times Higher Education asked the leaders of top-ranked institutions this question last year, one response stood out for its inspirational qualities.

Robert Zimmer, president of the University of Chicago, said that his institution was "driven by a singular focus on the value of open, rigorous and intense inquiry. Everything about the university that we recognise as distinctive flows from this."

He said that Chicago believed that "argumentation rather than deference is the route to clarity", that "arguments stand or fall on their merits" and that the university recognised that "our contributions to society rest on the power of our ideas and the openness of our environment to developing and testing ideas".

His answer prompted much praise. One Times Higher Education reader said that Zimmer's "glorious affirmation" was "marvellously refreshing" and had "brought joy to my heart, tears to my eyes and a renewed sense of commitment to the life of the mind".

But glorious as Zimmer's statement was, it also served to highlight the problem faced by the increasing number of people and organisations now in the business of ranking higher education institutions: how on earth do you measure such intangible things?

The short answer, of course, is that you cannot. What you can do, however, and what we have sought to do with these rankings, is to try to capture the more tangible and measurable elements that make a modern, world-class university.

When Times Higher Education first conceived its annual World University Rankings with QS in 2004, we identified "four pillars" that support a leading international institution. They are hardly controversial: high-quality research; high-quality teaching; high graduate employability; and an "international outlook".

Much more controversial are the measurements we chose for our rankings, and the balance between quantitative and qualitative measures.

To judge research excellence, we examine citations - how many times an academic's published work is cited.

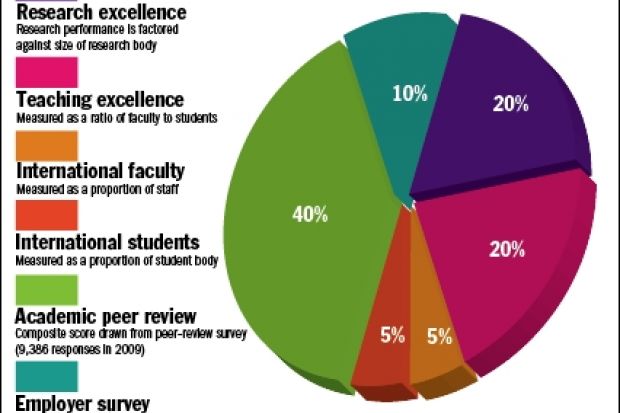

We calculate this element - worth 20 per cent of the overall score - by taking the total number of citations for all papers published by the institution and dividing that figure by the number of full-time equivalent staff at the institution. This gives a sense of the density of research excellence on a campus.
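To make the "density" point concrete, here is a minimal sketch of the citations-per-staff calculation described above. The `Institution` class, the `citations_per_fte` function and all figures are hypothetical, chosen only to show that a smaller institution with fewer total citations can still score higher on this measure.

```python
from dataclasses import dataclass


@dataclass
class Institution:
    name: str
    total_citations: int  # citations to all papers published by the institution
    fte_staff: float      # full-time equivalent staff


def citations_per_fte(inst: Institution) -> float:
    """Citations per full-time equivalent staff member (a 'density' measure)."""
    if inst.fte_staff <= 0:
        raise ValueError(f"{inst.name}: FTE staff count must be positive")
    return inst.total_citations / inst.fte_staff


# Hypothetical figures, for illustration only.
big = Institution("Big University", total_citations=500_000, fte_staff=5_000)
small = Institution("Small College", total_citations=150_000, fte_staff=1_000)

print(citations_per_fte(big))    # 100.0
print(citations_per_fte(small))  # 150.0
```

Dividing by staff numbers is what stops sheer size from dominating: the larger institution has more than three times the citations but the lower score.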

Our proxy for teaching excellence is a simple measure: the staff-to-student ratio. It is not perfect, but it is based on data that can be collected for all institutions, often via national bodies, and compared fairly. Our assumption is that it tells us something meaningful about the quality of the student experience. At the most basic level, it at least indicates whether an institution has enough teaching staff to give students the attention they require. This measure is worth 20 per cent of the overall score.

To get a sense of a university's international outlook, we measure the proportion of overseas staff a university has on its books (making up 5 per cent of the total score) and the proportion of international students it has attracted (making up another 5 per cent). This gives an impression of how attractive an institution is around the world, and suggests how much the institution has embraced the globalisation agenda.

But 50 per cent of the final score is made up of qualitative data drawn from surveys of informed people - university academics and graduate employers.

The fundamental tenet of this ranking, as we have said in previous years, is that academics know best when it comes to identifying the best institutions.

So the biggest part of the ranking score - worth 40 per cent - is based on the results of an academic peer review survey. We consult academics around the world, from lecturers to university presidents, and ask them to name up to 30 institutions they regard as being the best in the world in their field.

Responses over the past three years are aggregated, although only the most recent response from anyone who has responded more than once is used. For our 2009 tables, we have drawn on responses from 9,386 people. With each person nominating an average of 13 institutions, this means that we can draw on about 120,000 data points.
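The aggregation rule above (a three-year window, keeping only each respondent's latest answer) can be sketched as follows. This is a minimal illustration, not the rankings' actual code: the `Response` record, the function names and the reading of "past three years" as the current year plus the two before it are all assumptions.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    respondent_id: str
    year: int
    nominations: tuple[str, ...]  # up to 30 institutions named by the respondent


def latest_responses(responses, current_year, window=3):
    """Keep responses from the last `window` years and, for anyone who answered
    more than once, keep only their most recent response."""
    latest = {}
    for r in responses:
        if current_year - r.year >= window:
            continue  # older than the three-year window (assumed interpretation)
        prev = latest.get(r.respondent_id)
        if prev is None or r.year > prev.year:
            latest[r.respondent_id] = r
    return list(latest.values())


def count_nominations(responses):
    """Tally how many retained responses name each institution."""
    counts = Counter()
    for r in responses:
        counts.update(r.nominations)
    return counts


# Hypothetical responses for a 2009 table.
responses = [
    Response("a", 2007, ("Chicago", "Oxford")),
    Response("a", 2009, ("Chicago", "MIT")),  # supersedes a's 2007 answer
    Response("b", 2006, ("Oxford",)),         # outside the window, dropped
    Response("c", 2008, ("MIT",)),
]
kept = latest_responses(responses, current_year=2009)
print(count_nominations(kept))  # Counter({'MIT': 2, 'Chicago': 1})
```

At the scale quoted above, 9,386 respondents nominating 13 institutions each on average gives 9,386 × 13 = 122,018 nominations, which is the "about 120,000 data points" figure.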

The ranking also includes the results of an employer survey of 3,281 major graduate employers, making up 10 per cent of the overall result.
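With every weighting now stated, the overall score can be sketched as a weighted sum. This assumes, hypothetically, that each indicator has already been scaled to a common 0-100 range; the article does not describe how raw figures are normalised, so that step is left out. The indicator names and scores are illustrative only.

```python
# Weights in per cent, as stated in the article.
WEIGHTS = {
    "academic_peer_review": 40,
    "employer_survey": 10,
    "citations_per_staff": 20,
    "staff_student_ratio": 20,
    "international_staff": 5,
    "international_students": 5,
}
assert sum(WEIGHTS.values()) == 100


def overall_score(indicators: dict[str, float]) -> float:
    """Weighted sum of indicator scores, each assumed already on a 0-100 scale."""
    missing = WEIGHTS.keys() - indicators.keys()
    if missing:
        raise KeyError(f"missing indicators: {sorted(missing)}")
    return sum(w * indicators[name] for name, w in WEIGHTS.items()) / 100


# Hypothetical, already-scaled indicator scores for one institution.
example = {
    "academic_peer_review": 90,
    "employer_survey": 80,
    "citations_per_staff": 70,
    "staff_student_ratio": 60,
    "international_staff": 50,
    "international_students": 40,
}
print(overall_score(example))  # 74.5
```

Of that 74.5, the two surveys contribute 44 points (36 from peer review, 8 from employers), which shows how heavily the qualitative half of the methodology shapes the final result.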


THE SCORECARD

Something to talk about

For a university to be considered for the ranking, it must operate in at least two of five major academic fields: natural sciences; life sciences and biomedicine; engineering and information technology; social sciences; and arts and humanities. It must also teach undergraduates, so many specialist schools are excluded.
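The two entry criteria amount to a simple filter. In this minimal sketch the `is_eligible` function and the example institutions are hypothetical; only the five fields and the undergraduate requirement come from the text.

```python
# The five broad fields named in the article.
FIELDS = frozenset({
    "natural sciences",
    "life sciences and biomedicine",
    "engineering and information technology",
    "social sciences",
    "arts and humanities",
})


def is_eligible(fields_offered: set[str], teaches_undergraduates: bool) -> bool:
    """An institution qualifies if it teaches undergraduates and operates in
    at least two of the five fields."""
    covered = FIELDS & set(fields_offered)
    return teaches_undergraduates and len(covered) >= 2


print(is_eligible({"natural sciences", "social sciences"}, True))      # True
print(is_eligible({"life sciences and biomedicine"}, True))            # False: one field only
print(is_eligible({"social sciences", "arts and humanities"}, False))  # False: no undergraduates
```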

We do not pretend to be able to capture all of the intangible nuances of what it is that makes a university so special, and we accept that there are some criticisms of our methodology.

These rankings are meant to be the starting point for discussions about institutions' places in the rapidly globalising world - and how that is measured and benchmarked - not the end point. We encourage that discussion.
