Adapt, evolve, elevate: ChatGPT is calling for interdisciplinary action

It is 2023. Every sector has embraced artificial intelligence (AI) and its ever-expanding applications. While its ramifications are unclear – and should continue to form the basis of deep human interrogation – AI increasingly permeates almost every aspect of our lives. In light of this, it is a little embarrassing that the release of ChatGPT has caused such an uproar in higher education.

Across institutions of “higher” learning, the collective response to ChatGPT is being hindered by a misplaced focus on implications for academic integrity. Can students use AI tools to “cheat”? Maybe. Should we be assessing university and college students on work so trivial that a beta-version large language model can readily earn a high grade? No.

Rather than monitoring computer activity or limiting computer access (which feels rather like taking a “tinfoil hat” approach), we should be leveraging the potential of AI in teaching and research. We must rapidly adapt our approach to assessment and prepare ourselves to learn and work with AI as it evolves. Ultimately, we need to elevate our thinking and harness expertise across disciplines to confront inherent biases, marginalisation of some voices and issues of privilege and access.

Step 1: Adapt

ChatGPT is one harbinger of a massively exciting technological shift, and we must move quickly to leverage its potential. Getting started is easy: if you haven’t already, create a free account and play with it. A little experimentation quickly reveals how ChatGPT can be used in your discipline and classroom – as well as its limitations. My starting point was to ask Chat (as I’ll affectionately refer to it) my test questions in 300- and 400-level functional anatomy.

Chat generally performed abysmally. Good performance put up an immediate flag: is that question based on lower-order thinking skills or recall? Do I really want to test my students on that? If so, why? Even in foundational courses, we need to ask ourselves these questions. We are training students to work as though they have continual access to AI. Because they will.

For many, 10 minutes on ChatGPT will alleviate concerns about its abuse. It produces text, code, arguments and solutions that seem plausible at first glance but are often inaccurate. For teaching, this is a gold mine: how often do we need to generate inherently flawed examples for our students to critique? Now we can do it live, in the classroom, in 30 seconds. Where are the errors in this piece of code? Did Chat capture all the crucial information when it summarised this abstract in accessible, non-specialist language?

It’s worth bearing in mind that these limitations will likely be short-lived: ChatGPT will improve rapidly, and it will be replaced by newer and better technology (including GPT-4). In the interim, exploiting its limitations helps our students approach the same content working in higher cognitive domains. We can teach our students to interrogate solutions produced by AI the way they should evaluate any other source of information, including their instructor – and this critical thinking is a lesson worthy of our efforts, our expertise and our students’ tuition.

Step 2: Evolve

In addition to examining and exposing the limitations of ChatGPT as a teaching and learning tool, we need to learn how to leverage its capabilities – and how to teach this. The applications are context-specific, and enthusiastic early adopters such as Matt Miller have come up with excellent ideas that make our work easier, not harder. From these and other rich repositories, two approaches stand out to me as broadly applicable.

The first is leveraging ChatGPT as a powerful search engine and a launch pad for research and ideas. I show students how to use Chat to generate ideas for research papers. It can provide a very high-level summary of a field and help us draft a strategic search in an academic database. We experiment with asking the right questions and using the right prompts – and this is crucial: leveraging AI effectively is a craft that students need to hone.

ChatGPT can also be used to help students practise meta-cognition and learn how to learn. As apprentices in their disciplines, students rely on faculty to make explicit the strategies and thinking they use to tackle content, and Chat can be leveraged in this process.

For a New York Times article about ChatGPT’s educational potential, Kevin Roose interviewed eighth-grade history teacher Jon Gold. In addition to having an outstanding surname for a teacher, Gold was highly innovative: he asked Chat to come up with multiple-choice questions about a selected news article. I love this idea, and we could take it further. We could instruct students to use Chat to come up with questions, then choose the best question and submit it to contribute to a quiz.

This models multiple crucial ideas: how and whether to use AI as a starting point for summary and review; how to actively test yourself on material, rather than stopping at passive consumption; what makes a good test question and why; what makes a test question easy or hard and why; and what aspects of material are worth learning and why.

Step 3: Elevate

ChatGPT is a catalyst that should invite us to interrogate our teaching practices – with the potential outcome of elevating our work. In parallel, we need to elevate our thinking. We need to redirect our conversations and energy, moving away from the possibility of students “cheating” on assessments that were essentially busywork in the first place and towards higher-level, complex issues surrounding the use of AI: what are the biases built into its design? What types of data is it trained on, and how were those data collected? Whose work, opinions and voices are included or considered authoritative (and how is that determined)? Whose voices are marginalised, minimised or excluded? How much will future tools cost, and will this cost be borne by students or institutions?

To confront these substantive issues around AI, our response to ChatGPT and its successors should be one that encourages open, interdisciplinary discourse and supports research investigating AI from every angle, including its ethical and social implications. In addition to forcing us to update our tired teaching practices, ChatGPT coerces us out of our specialist silos and into engaging with our colleagues in other units and disciplines: we need expertise across fields to inform our decision-making in our own practice and to examine the role of AI in higher education and in society.

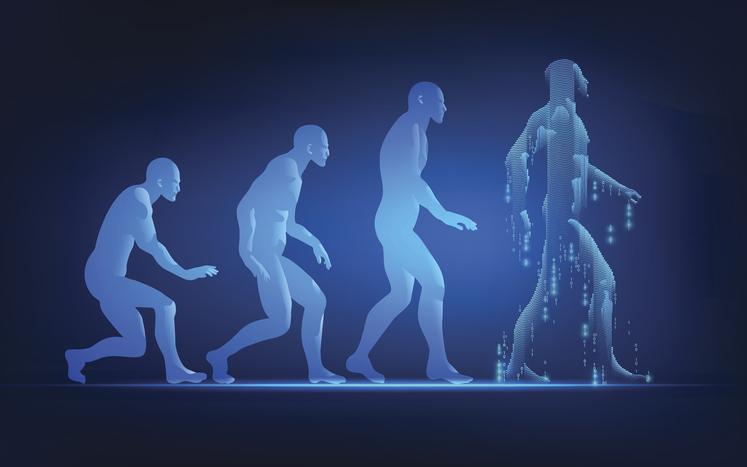

The time for wringing our collective hands about the potential for misuse of AI in higher education is over. The time for excitement around harnessing this technology to elevate higher education to something befitting its moniker is here. Institutions and individuals who refuse to adapt to a world in which AI plays a central role are in danger of becoming dinosaurs. And we know what happened to the dinosaurs: according to ChatGPT, they “went” extinct (awkward wording, Chat) approximately 65 million years ago. Maybe it’s time for many of our standard practices in higher education to suffer the same fate.

Leanne Ramer is a senior lecturer in the department of biomedical physiology and kinesiology at Simon Fraser University, Canada.
