The robots are coming. Future-gazers have been making that prediction at least since Alan Turing speculated in 1950 about the possibility of a machine that could fool an interlocutor into believing that they were talking to another person.

But the imminent arrival on our roads of self-driving cars (see the article “How do we decide what is right? The ethicist’s view”, below) has brought home to many people that the kinds of artificially intelligent machines long imagined by science fiction writers and visionary scientists are finally being realised.

But what does the AI revolution mean for universities? To find out, Times Higher Education has teamed up with Microsoft to conduct a major survey of more than 100 AI experts and university leaders.

The findings include:

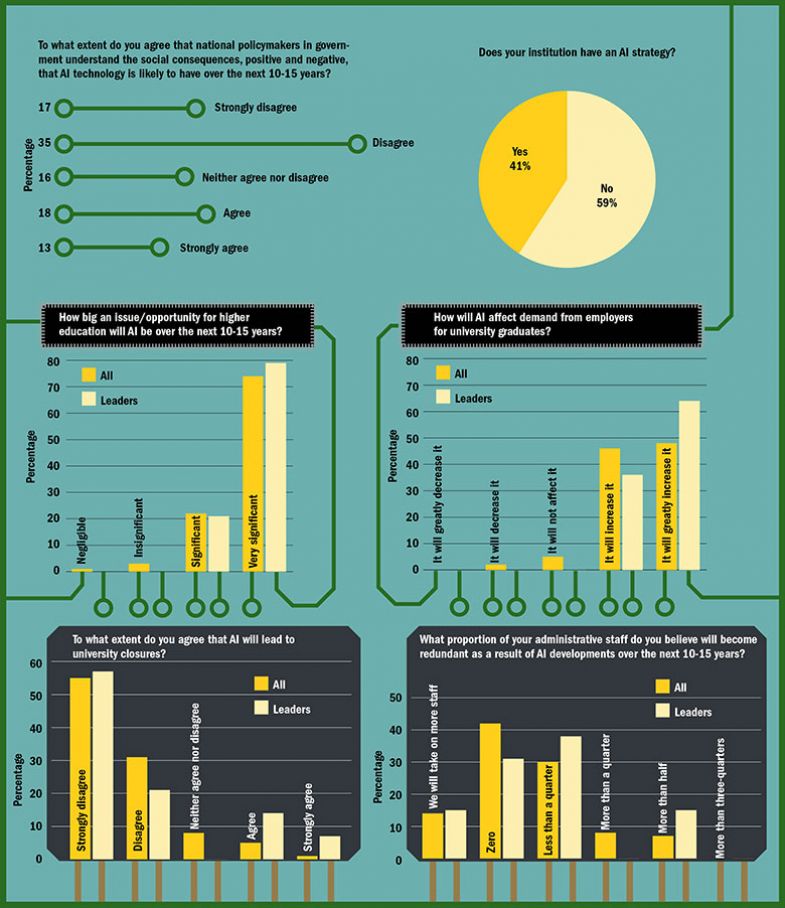

• Only a minority of universities currently have an AI strategy, but most plan to develop one

• Universities find it difficult to recruit and retain staff able to teach and research in AI

• AI will increase employers’ demand for university graduates and will not lead to university closures

• AI will be able to assess students, provide feedback and generate and test scientific hypotheses at least as well as humans can

• But universities will not cut teaching, research or administration staff and may even recruit more.

Private corporations are in a desperate race to put affordable AI machines on the market, and politicians are doing all they can to facilitate that, anxious for the enormous tax revenue that national success in this area is expected to yield – not to mention the military superiority.

Last year, for instance, Darpa, the US government’s Defense Advanced Research Projects Agency, pledged $2 billion to develop next-generation AI systems capable of “contextual reasoning”. China, the US’ great geopolitical rival, is also making huge investments, as is Europe. The UK is investing £1 billion (£300 million of it public money) in AI as part of its industrial strategy, which will include 1,000 new PhD places for those working on AI and related subjects. France and Germany are also investing in excess of £1 billion each.

And universities themselves are independently seizing the opportunities to get ahead; last year, the Massachusetts Institute of Technology announced a $1 billion commitment to establish a new college of computing, focusing on AI.

Yet there is widespread anxiety about the socio-economic consequences that this so-called fourth industrial revolution might have. The mushrooming volume of ink spilled in recent years on the topic is usually predicated on fears that many jobs – including some currently done by graduates – will be taken over by machines, potentially leading to mass unemployment. For instance, writing in the 2017 book Future Frontiers: Education for an AI world, Richard Watson, a futurist and visiting researcher at Imperial College London, questions the role of higher education in its current guise if “advanced machine learning and autonomous systems are capable of doing almost everything humans can do at a fraction of the cost”. He worries that universities are “teaching the next generation to become rapidly redundant in the face of accelerating technological change”.

Others argue that AI will create as many jobs for humans as it eliminates, but unease persists in those likely to be most affected by the changes. Of the 409 students who responded to a recent survey conducted by researchers at London’s Hult International Business School, only 31 per cent feel hopeful about the prospect of living and working with AI and automation, while 18 per cent feel mainly fear. Only 20 per cent feel confident and very prepared for what is to come.

“It was clear from the findings that universities need to do more to discuss this topic and also relieve [students’] feelings of uncertainty,” says Carina Paine Schofield, senior research fellow at Hult and co-author of the study. “[They] are the first generation for whom automation will definitely impact their working lives, yet their education system is only just beginning to wake up to the consequences of automation.”

It is with such warnings in mind that the THE-Microsoft survey was launched. What do those best positioned to give an informed view believe the consequences of the fourth industrial revolution will be for higher education – and how are universities readying themselves to respond to those changes? If AI significantly reduces the demand for human labour, will it also diminish the demand for a university education – or perhaps increase it, as desperate jobseekers bolster their CVs with ever more qualifications? And even if it does, will that translate into more jobs for academics – or will teaching and even research largely be taken over by intelligent machines, too?

The uncertainty surrounding the socio-economic effects of AI is reflected in the fact that just 31 per cent of the 111 respondents agree that national policymakers understand the social consequences that the AI technology they are funding and facilitating is likely to have over the next 10-15 years, compared with 52 per cent who disagree.

Yet, at the same time, respondents appear remarkably confident that universities and academics will remain relevant. Nearly all agree that AI will be a very big issue for higher education. And while only 41 per cent of the respondents – 80 per cent of whom are computer science academics – say that their institutions have specific AI strategies, most of those who don’t are acutely aware of the omission, and most of the university leaders among the respondents express an intention to develop strategies where they do not already have one.

Meanwhile, although only 43 per cent of respondents say that their institution has allocated internal budget for AI-related institutional projects, 78 per cent believe that their university has the right skills internally to work on such projects, and nearly three-quarters of the 15 university leaders and seven chief technology officers in the survey have drawn on internal faculty expertise in AI to plan their institutional futures.

Regarding that planning, the name of the game seems to be to prepare for ongoing expansion, rather than agonising over how to manage decline. Some 94 per cent of respondents – and all the university leaders – believe that AI will increase the demand from employers for university graduates, while only 2 per cent expect it to drop.

Accordingly, 86 per cent of respondents disagree – most of them strongly – with the suggestion that AI will lead to university closures, and 94 per cent disagree that it threatens their own universities’ futures. Contrariwise, 95 per cent see it as an opportunity.

That does not mean that no work is needed to realise that opportunity. Only 24 per cent of respondents agree that their university is optimally configured physically for the age of AI, compared with 35 per cent who disagree. And many see AI leading to a shake-up in the administrative roles that universities will need to cover; alongside IT, student services and admissions are expected to see the biggest changes.

Regarding student admissions, Alice Gast, president and vice-chancellor of Imperial College London, told THE’s Asia Universities Summit last year that universities will use AI to select the best candidates for degree courses, noting that Unilever is already using AI and social media to screen candidates for internships and graduate jobs.

Some respondents welcome the prospect of fewer administrators. Olena Kaikova, a senior researcher in computer science from the University of Jyväskylä in Finland, put it this way: “Who would want to do a boring routine job if it can be delegated to AI robots?”

Those whose mortgages depend on such jobs may beg to differ, of course. But perhaps they ought not to worry too much. More than half of THE’s respondents (56 per cent) – and just under half of university leaders (46 per cent) – expect AI either to increase universities’ need for administrative staff or to have no effect on it over the next 10 to 15 years. Of those who expect it to lead to job cuts, the vast majority predict that those cuts will account for less than a quarter of current jobs.


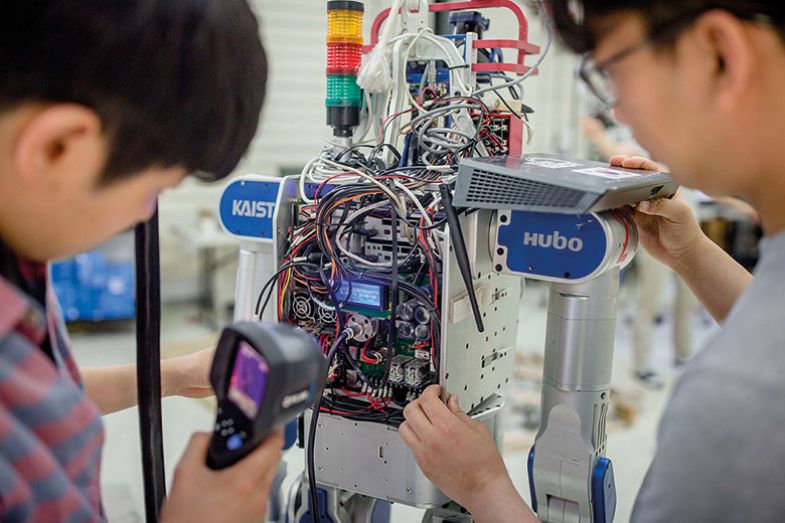

One group of people whom universities are desperate to recruit is computer science experts. One approach is to train them in-house. For instance, the Korea Advanced Institute of Science and Technology (KAIST) has just set up a new Graduate School for AI, aimed at turning 60 students a year into what KAIST president Sung-Chul Shin calls “top-tier AI engineers”.

Shin’s ambition is to make the school one of the “top five AI schools in the world”, in terms of number of publications in the field, by 2025. It currently ranks 10th in the Computer Science Rankings run by Emery Berger, a computer science professor at the University of Massachusetts Amherst, but Shin expects that with the help of an allocated budget of 22 billion KRW (£15 million), on top of 23 billion KRW in external grants, the school will “break new ground”.

In realising this ambition, it will no doubt help that, according to Shin, KAIST’s current AI researchers are already “the cream of the crop”. But not every institution can say the same – and none can be overly confident of holding on to what they have, given the huge salaries on offer in the tech industry.

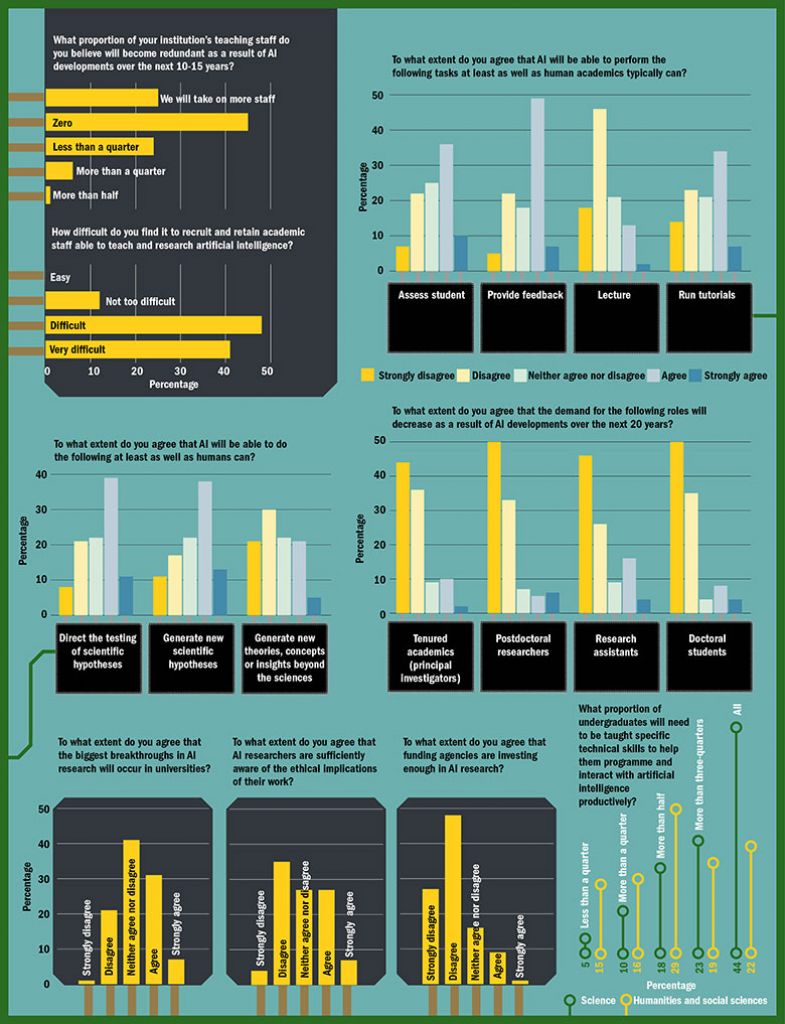

According to Karin Immergluck, executive director of Stanford University’s technology licensing office, losing existing staff to industry is “definitely becoming more of a problem in Silicon Valley – not just for Stanford but for the University of California, San Francisco and Berkeley as well”. But, regardless of their proximity to Silicon Valley, not one of THE’s respondents finds it easy to recruit and retain academic staff able to teach and research AI, and most find it “difficult” (48 per cent) or “very difficult” (41 per cent).

Frederik Heintz, a senior lecturer in computer science at Linköping University in Sweden, plumps for the latter option, explaining that “universities cannot compete in salary and other compensations with the private sector. Too much administrative overhead is another major issue.”

An Australian university leader, who asked not to be identified, agrees that “the uncertain, less-well-paid life of an academic” often compares poorly with a career in industry.

But Immergluck feels quite relaxed about the situation, depicting the migration of academics into industry as “just another form of tech transfer. The general public and industry are benefiting from the knowledge that a professor has gained over years of doing research at a university. Of course, no one likes losing their star faculty but it just is [a fact]. It’s a part of being in that kind of very interactive environment where universities and industry are collaborating very closely.”

Several respondents also highlight the fact that the brain drain has the virtue of facilitating academic collaboration with the tech world, which can be mutually beneficial. Moreover, the direction of travel is not all one way. According to Immergluck, US academics often return from a spell of “three or four years” in industry. And while they are away, “their previous university is the first one that they are going to think of when they want to form collaborations”.

Still, the pull of industry is such that although our respondents rank research as the area of university management and practice likely to be most affected by AI, they are less sure that the biggest AI research breakthroughs will occur in universities: 38 per cent believe that they will, but only 7 per cent strongly believe that, while 23 per cent disagree (the rest are unsure).

Jyväskylä’s Kaikova explains that, in her view, “universities do not have enough resources for the breakthroughs”. But it isn’t just financial resources that universities lack. Speaking at THE’s Research Excellence Summit: Asia-Pacific in Sydney earlier this year, Pascale Fung, professor of computer science and engineering at the Hong Kong University of Science and Technology, warned that one of the biggest challenges facing universities is access to the huge amounts of data needed to develop AI systems.

“Universities today cannot compete against the Googles of the world because they do not have that data. So we are actually facing the challenge of not having equal access to the raw material of our research,” she said.

The best way forward, she tells THE, would be for tech companies to share some of their data with universities in an “anonymised and randomised” way, so as to comply with data protection laws. Universities could also focus their efforts on “more specialised topics within the relevant research areas”. This would allow them to “maximise the impact of their research without being marginalised”, she explains – although it is “tricky to do and requires insight and vision”.

Many survey respondents suggest that the question of which sector will produce the biggest breakthroughs is not conducive to an either/or answer. “Fundamental research will still be done in universities, where constraints are more relaxed than in industry,” predicts Eduardo Alonso, a reader in computing at City, University of London. “On the other hand, natural competition will bring significant applied breakthroughs developed in companies,” he adds.

Linköping’s Heintz agrees that universities will tend to focus on basic research, “which means that their breakthroughs will be significantly delayed compared to the applied research done by companies”. Hence, “the public perception will probably be that industry is doing most of the research, when, in fact, [it is] piggy-backing on what the universities have done for centuries”.

For Fung, it is “imperative” for universities to be more creative in their employment practices to allow their academics to hold part-time positions in industry. She says this is already happening in the US, but she fears that universities in other countries might struggle with public perceptions: “In Hong Kong, for example, our universities are publicly funded, so it is difficult to justify [giving someone] a full-time professor role while allowing that professor to also be part-time in industry. But these are the challenges we are facing, so some kind of innovative thinking needs to happen.”

Joint positions with industry could also allow universities to tap into tech firms’ enormous research budgets; 76 per cent of respondents believe that funding agencies are not currently investing enough in AI research. There is also widespread concern that not enough funding is going into researching the philosophical and ethical aspects of AI (see “How do we decide what is right? The ethicist’s view”, below). Asked whether AI researchers are sufficiently aware of the ethical implications of their work, only 36 per cent of our respondents agree, while 41 per cent disagree.

“There is a lot of work going on in AI by way of tech – boys’ toys and home robotics, creepy gadgets and so on – but what a lot of people are trying to look into is the ethical tone of it all,” says Sandra Leaton Gray, professor in education at UCL Institute of Education. “Unfortunately that’s a minority sport: it’s really difficult to do any humanities- and social sciences-based work on it because grants are not tailored towards it.”

Leaton Gray is part of a new specialist interest group set up within the British Educational Research Association to redress the dearth of AI research in the discipline. “Amazing projects have not been funded because they are difficult to review,” she says. “How do you go about reviewing a proposal for something that nobody really understands yet? So many of the AI and education funding proposals are arbitrarily rejected by confused reviewers with little expertise in what is quite a new field. It is imperative that we get this right, first by more enlightened grants for social science-related AI research, not just more money for the tech promising to bring in more money.”

What about AI’s impact on the way research is conducted? To what extent could AI actually take over the research process itself? Could there ever be an AI version of Alan Turing (who was recently voted the “greatest person of the 20th century” by BBC viewers, largely for his groundbreaking use of a proto-computer to break Nazi codes during the Second World War)?

Almost all respondents expect AI to have a significant or very significant effect on the way that research is conducted. Indeed, this is already happening to some extent. For instance, Lee Cronin, Regius chair of chemistry at the University of Glasgow, has been using AI bots since 2010, most recently to mix chemicals methodically and at random in the hope of discovering beneficial new reactions.

Respondents are confident that this is just the beginning. Most agree that AI will have the cognitive capacity to participate in scientific advancement, at least to some extent. Exactly half believe that AI will be able to direct the testing of scientific hypotheses at least as well as humans can, and 52 per cent think machines will be able to generate new scientific hypotheses as well as humans can. Respondents are less sure of whether AI will be able to generate new theories, concepts or insights in non-scientific disciplines, but 26 per cent believe that it will.

Cronin himself, though, is more sceptical, remarking that his bots “have discovered nothing on their own, since they all have a human overlord”. For this reason, he strongly disputes the suggestion that the involvement of silicon brains will reduce the need for carbon-based researchers. “My robots are going to make boring stuff obsolete so we can focus on being creative,” he says.

And whatever their views on the potential of AI, most of our survey respondents agree that it will only complement rather than replace human scientific input; as Heintz puts it: “Humans and AI [working] together is…much more powerful than either one or the other.” The vast majority disagree with the suggestion that AI developments over the next 20 years will result in decreasing demand for humans in the lab. That view holds even for research assistants, who typically carry out the more routine tasks: just 20 per cent of respondents expect demand for them to drop, compared with 72 per cent who do not. Of the latter, 46 per cent strongly disagree with the suggestion.

Teaching staff also have little to fear from AI, our respondents predict. Nearly half (45 per cent) believe that AI will not result in any teaching staff being made redundant over the next 10 to 15 years. Meanwhile, 25 per cent expect their institutions to take on more teaching staff, with many predicting that the rise of AI will increase the demand for education from humans seeking to remain employable. Only 7 per cent of respondents think that AI will lead to more than a quarter of teaching jobs being lost, and just 1 per cent expect more than half to go.

Asked how great the impact of AI will be on curricula and pedagogy, most respondents say that it will be “significant” (56 per cent) or “very significant” (33 per cent). Respondents are reasonably confident that AI will be able to provide student feedback at least as well as humans can, with student assessment another area where AI could play a big role. But they are less confident that an AI teaching assistant could run a tutorial or, especially, give a lecture: just 15 per cent of respondents believe AI could rival a human at that task, compared with 64 per cent who disagree.

The key reason cited is that learning is stimulated by a human presence. According to Heintz, “all aspects of teaching and learning can be improved by AI-technology, but learning to a large degree is a social process, where doing it together with other people is important”. A computer scientist from the Republic of Ireland agrees: “A human knows what it’s like for a human to learn, and this will be hard to replicate for AI. Some students will always benefit from a human ‘overseer’ providing motivations/deadlines, and some will feel that they need human contact.”

But what students study may well change. As one of the students who participated in the Hult survey put it, “Students across the world will have to face the possibility that perhaps what they are dedicating their lives to studying right now…may soon become redundant.”

Unsurprisingly, computer science is the discipline whose graduates are most frequently predicted to see growing employer demand, followed by engineering, medicine and business. But making such predictions is a very imprecise art, as underlined by the fact that business is also among the disciplines forecast to be most likely to see a decline in demand for its graduates, behind languages but ahead of law.

Meanwhile, respondents are keen on the idea that not only science students but also humanities and social science students will need to be taught specific technical skills to help them programme and interact with artificial intelligence productively: 41 per cent of respondents believe that more than three-quarters of the latter will need such training. But what is interesting in the Hult survey responses is the active desire among students for more courses in subjects like ethics and philosophy. There was also a sense that universities should focus on skills and subject areas where AI is less likely to have an advantage: those that require aptitudes such as complex decision making, critical thinking, gut instinct, entrepreneurship and emotional intelligence. For this reason, many observers predict that liberal arts degrees will be as much in demand as computer science courses.

In terms of teaching staff’s specific duties, Leif Azzopardi, Strathclyde chancellor’s fellow in computer and information sciences at the University of Strathclyde, says his institution will potentially take on more people “to deliver better services” to students in collaboration with AI. “Teaching staff’s duties will certainly change from mundane tasks such as marking to engaging more with students to create unique learning experiences,” he says. However, “of course, institutes that do not embrace AI will not be as competitive, and will have to make redundancies”.

It is, of course, important to remember that university teaching and learning is not just about preparing people for the jobs market. In a separate chapter of the Future Frontiers book, Toby Walsh, Scientia professor of artificial intelligence at UNSW Sydney, stresses that “with society under a period of significant change, we will also need an informed population to navigate this future, and to demand appropriate checks and safeguards. A citizenship educated in ethics, society and civics is therefore essential.”

And most observers agree that the frequency with which people access higher education will increase: “Just as the [first] industrial revolution made it essential that universal education was provided to the young, the AI revolution will make it essential that education is provided to people at every age of their lives,” Walsh tells THE, allowing people to keep their skills up to date.

But he denies that this amounts to a call for wholesale change. “AI won’t change the ultimate mission of universities – educating people to the frontiers of our knowledge and undertaking research to expand that frontier – but it will change how that mission is delivered,” he says. “AI can help flip the classroom, personalise education and tackle the increasingly and distressingly prohibitive cost of delivering that education. Some of the skills that universities help people learn will change. But the skills that will be most in demand will tend to be old-fashioned ones, that universities used to deliver, such as analytical and creative thinking.”

This may be particularly true in the West, he predicts, where universities may see their niche in terms of “soft skills and higher ethical standards” – while the likes of South Korea and China, with their bigger research budgets, plough a more purely technological furrow.

Glasgow’s Cronin also cautions university leaders against getting carried away by what he sees as the largely unjustified hype surrounding AI. “The key problem, as ever, is that a small pool of academics have managed to push politicians to think that investing in AI research is going to change the world. I don’t think that is right,” he says.

Universities remain “the cradle of innovation and invention”, he says. “AI machine learning can never replace that until you make a totally new, self-replicating machine or life form with artificial consciousness…And that will remain firmly in the realm of science fiction for many hundreds of years.”

Help with distribution of this survey was provided by the Confederation of Laboratories for Artificial Intelligence Research in Europe.

The results of the THE-Microsoft Artificial Intelligence Survey will be discussed at THE’s Innovation & Impact Summit, held at Korea Advanced Institute of Science and Technology (KAIST) in Daejeon, South Korea from 2-4 April 2019.

How do we decide what is right? The ethicist’s view

For all the intellectual achievements of the past century, many concede that there has been little progress in solving philosophical problems.

There is no broad agreement, for example, about whether free will exists, whether the mind is more than the sum of its parts, or even whether a runaway tram should be diverted from hitting five people at the price of hitting one: the famous “trolley problem” first posed by Oxford philosopher Philippa Foot in the 1960s.

Perhaps that explains why only two or three philosophy papers are among the 30 citations in a recent Nature article, “The Moral Machine experiment”, on the actions that self-driving cars should take in the event of a dilemma resembling the trolley problem.

This is hugely important because we are rapidly entering an era in which artificial intelligence algorithms will determine who lives and who dies, not only in car accidents but also in healthcare and drone warfare. We urgently need a manual of machine ethics – but no one is quite sure how to devise one, or who should be involved.

The Nature paper assumes – drawing on an interview with former US president Barack Obama – that consensus is a critical criterion for determining a “correct” set of ethical principles for self-driving cars. But what the paper reveals is that “we” seem to agree on little other than sparing people over animals, more over fewer people and the young over the old. Drawing on survey results from 2.3 million people, it shows that there are significant differences between the intuitions of different geographical groups: “Western” people have a preference for sparing the fittest; “Eastern” people prefer to spare the law-abiding (bad news for jaywalkers); while “Southern” people (Latin Americans, among others) are inclined to spare women and those of higher status.

This shows how difficult the task of programming ethical rules into machines will be. But, to a philosopher, there is nothing revelatory about the idea that people from different cultures have different views about what is right or fair. The interesting (and, not surprisingly, unanswered) question is whether ethical preferences come from objective principles or from culture – or, rather, the extent to which culture determines individuals’ perception of moral principles. Yet while these questions are critical to the authors’ claims, they are barely discussed in the paper.

This points to the increasing disconnect between the cultures of philosophy and technology – particularly among those involved in designing machine-learning algorithms. Few people in industry care what philosophers have to say. We can talk about what truth is or is not, and political disinformation will continue. We can talk endlessly about what makes human intelligence unique, and the media will continue to claim that programmers have finally developed a machine able to think in a way that actually resembles human intelligence. And we can say over and over again that there isn’t really a good answer to the trolley problem, but self-driving cars will appear on the roads regardless.

Industry no doubt sees enormous surveys probing moral consensus, such as the one in the Nature paper, as the key to programming driverless cars. But what if consensus isn’t the right way to go at all? What if machinery should be programmed to strictly adhere to, say, utilitarian principles?

It may be that a good start to a solution lies in education. A slightly less recent Nature viewpoint suggests that the “philosophy” part of the doctorate of philosophy should be beefed up. The article was written by the director of the promising R3 initiative at Johns Hopkins University, which aims to promote “rigour, responsibility and reproducibility” in scientific practice. Interdisciplinary understanding, with a particular focus on philosophy, may help to improve these three Rs: if researchers are trained to question the foundations of the scientific method, their scientific reasoning is likely to be more robust.

Moreover, their sensitivity to the pressing ethical questions that emerging technologies pose will be much more acute – even if the answers remain difficult to determine.

Jonathan R. Goodman is a fellow of the Institute of Global Health Innovation at Imperial College London, and a doctoral student at the University of Cambridge’s Centre for Human Evolutionary Studies.

‘Swimming in too much information’: The naysayer’s view

The “tech tsunami” that is already engulfing lower-skilled jobs has not triggered a mass ascent to the higher ground of advanced education, according to social theorist Anthony Elliott. And the idea that continuous retraining could help people ride the wave of technological disruption is “wildly optimistic”, he adds.

In his new book The Culture of AI, Elliott – dean of external engagement, professor of sociology and executive director of the Hawke EU Jean Monnet Centre of Excellence and Network at the University of South Australia – argues that while apocalyptic fears of a future dominated by cyborgs and killer robots are missing the point, the prospect of mass unemployment is very real.

According to Elliott, the AI revolution is already upon us. It is acting alongside associated trends – including accelerated automation, big data, 3D printing, cloud computing, Industry 4.0 and the “internet of everything” – to reshape everyday life in pervasive but often “mundane” ways.

Claims that education can keep pace with all this change are ill-conceived, he writes. “The automation of many lower-skilled jobs has not necessarily produced more opportunities for advancing education levels or retraining, and recent evidence indicates that the idea of continuous retraining is optimistic at best.”

In support of that view he cites the fact that unemployment in European OECD countries rose from 2.6 per cent in 1970 to nearly 11 per cent in the mid-1990s despite continuous efforts to retrain those affected by the introduction of first-generation robots on production lines and elsewhere. He also notes that there has been a decline in demand for skilled workers since 2000 even as the supply has increased, resulting in more highly skilled workers replacing lesser skilled workers lower down the occupational ladder, worsening the plight of the unskilled.

Analyses in the UK, US, Japan and Australia have all concluded that 40 to 50 per cent of jobs will disappear within the next 15 to 30 years, Elliott tells Times Higher Education: “If those figures are only half right, trying to reskill people to keep up with that level of change ain’t going to cut it,” he says.

Lifelong learning has value “in and of itself”, Elliott concedes, because “it’s an individual, social and public good to have an informed citizenry”. But it should not be viewed as “an insurance policy against this tech tsunami”.

His book criticises the notion that workers dislodged from largely routine and predictable forms of employment can reinvent themselves by acquiring digital skills in “a kind of relentless self-fashioning…to update talents for the jobs of the moment. The truth, at least for millions of average workers around the globe, is that technology often results in significant deskilling.”

For Elliott, the dogma of continuous retraining “smacks of a particularly Western individualist orientation – faster, quicker, leaner, more self-actualising. It’s very much a privatisation of a public problem [where] individuals lift themselves up by their own bootstraps and get on with the work of being more economically productive.” But, in his view, people need help in navigating the brave new digital world at a more basic level.

“We’ve entered an age of big data: we’re swimming in too much information,” he says. “It’s people that have to do the work to integrate all this data into their lives, their work structures, the way they do things. That’s where we need the public discussion about AI.”

John Ross

POSTSCRIPT:

Print headline: HAL, et al
