The current research excellence framework is “a bloated boondoggle” that “steals years, and possibly centuries, of staff time that could be put to better use, and includes so many outcome measures that every university can cherry-pick its way to appearing ‘top-ranking’ ”.

This is the view of Chris Chambers, professor of cognitive neuroscience at Cardiff University, and it is by no means unique.

So what if much of that labour could be replaced by automated metrics? Academics’ conviction that peer review is the gold standard of research assessment means that evaluating the viability of a replacement scheme hinges on how closely metrics could reproduce the conclusions reached by humans. The debate was invigorated in 2013 when Dorothy Bishop, professor of developmental neuropsychology at the University of Oxford, argued in a widely read blog that a psychology department’s h‑index – a measure of the volume and citation performance of its papers – during the assessment period of the 2008 research assessment exercise was a good predictor of how much quality-related funding it received based on its results. But the suggestion that this might also be true in other disciplines was dealt a blow earlier this year when a team of physicists found that departmental h-index failed utterly to predict the REF results.
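For readers unfamiliar with the h-index: a set of papers has index h if at least h of them have been cited at least h times each. The minimal Python sketch below applies that standard definition to a list of citation counts. The function name `h_index` is hypothetical, and this is an illustration of the definition, not the code Bishop or the physicists used.

```python
def h_index(citations: list[int]) -> int:
    """Largest h such that at least h papers have h or more citations each."""
    ranked = sorted(citations, reverse=True)  # most-cited papers first
    h = 0
    for position, count in enumerate(ranked, start=1):
        if count >= position:
            h = position  # the top `position` papers all have >= position citations
        else:
            break  # every later paper has fewer citations, so h cannot grow
    return h


# At least three of these papers have 3 or more citations,
# but fewer than four have 4 or more, so h is 3.
print(h_index([10, 8, 5, 3, 2, 0]))
```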

Times Higher Education asked Elsevier, owner of the Scopus database and provider of citation data for our World University Rankings, to carry out its own analysis of correlations between citations data and quality scores in the REF.

The analysts reasoned that examining the metrics of only the papers submitted to the REF would not show whether the REF could be replaced by an exercise that spared university departments the labour of selecting which papers to submit.

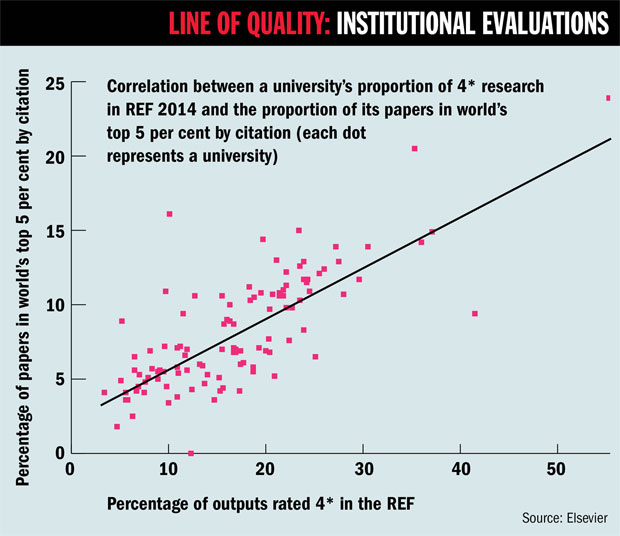

So instead they examined the proportion of each institution’s entire output over the REF assessment period (2008 to 2013) that fell into the top 5 per cent, by citation count, of all articles published during that period in the fields covered by the relevant unit of assessment. Papers were credited to the institution where they were produced, even if the author had moved before the census date.
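The share-of-top-papers measure can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: it uses a single pool of "world" citation counts for one field, takes the count of the paper at the 5 per cent boundary as the threshold, and counts every paper tied at that threshold. Elsevier’s actual method (for example, any normalisation by publication year) is not described in the article, and the function names are hypothetical.

```python
def top_share_threshold(world_citations: list[int], top_percent: int) -> int:
    """Citation count of the paper sitting at the boundary of the world's top `top_percent` per cent."""
    ranked = sorted(world_citations, reverse=True)
    # Integer ceiling of n * top_percent / 100, with at least one paper in the top group.
    cutoff_rank = max(1, (len(ranked) * top_percent + 99) // 100)
    return ranked[cutoff_rank - 1]


def share_in_top(institution_citations: list[int], world_citations: list[int],
                 top_percent: int = 5) -> float:
    """Fraction of an institution's papers at or above the world top-`top_percent` threshold.

    Assumption: papers tied at the threshold all count as "top" papers.
    """
    if not institution_citations:
        return 0.0
    threshold = top_share_threshold(world_citations, top_percent)
    hits = sum(1 for c in institution_citations if c >= threshold)
    return hits / len(institution_citations)


world = list(range(100))  # toy world: 100 papers cited 0 to 99 times
print(share_in_top([99, 96, 50, 10], world))
```

In this toy case the top-5 per cent threshold is 95 citations, so two of the institution’s four papers qualify.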

This figure was compared with the proportion of the institution’s outputs judged to be 4* (“world-leading”) in the REF. The analysts found a reasonable coefficient of determination (R²) of 0.59, where 1 indicates a perfect linear relationship. However, as the graph below shows, there are plenty of outliers.
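For a straight-line fit, the coefficient of determination measures how much of the variation in one quantity (here, the share of 4* outputs) is accounted for by a linear function of the other (the share of top-5 per cent papers). The Python sketch below assumes an ordinary least-squares fit with one point per institution; the article does not say exactly how Elsevier fitted its line, and the function name is hypothetical.

```python
def r_squared(x: list[float], y: list[float]) -> float:
    """R^2 of the ordinary least-squares line y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual variation left after the fit, versus total variation around the mean.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# Toy data: x = share of top-5% papers, y = share of 4* outputs, one pair per institution.
print(r_squared([1, 2, 3, 4], [2, 1, 4, 3]))
```

For a single straight-line fit like this, R² equals the square of the Pearson correlation coefficient, so the 0.59 reported here corresponds to a correlation of roughly 0.77.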

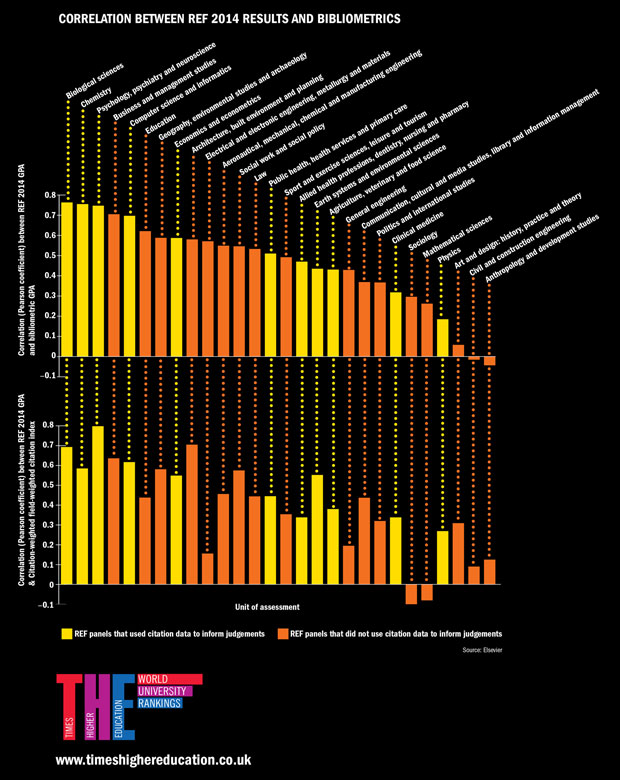

The analysts then looked in more detail at correlations at subject level. For the 28 units of assessment with reasonable coverage on Scopus (most arts and humanities subjects currently fall outside this group), they created a “bibliometrics grade point average” based on the proportion of each department’s total articles whose citation counts fell within the global top 5, 10, 25 and 50 per cent. They compared this with GPAs calculated in the standard way from scores in the outputs section of the REF, which accounted for 65 per cent of the total REF score. As the graph at the end of this article shows, correlations varied from 0.76 in biological sciences to −0.04 in anthropology and development studies, where 1 is a perfect correlation and −1 is a perfect inverse correlation.
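The two GPAs and the correlation between them can be sketched as follows. The REF outputs GPA is the usual weighted average of the star profile. The bibliometric version is a hypothetical reconstruction: the article does not give Elsevier’s weights, so this sketch simply assumes that the top-5, 5 to 10, 10 to 25 and 25 to 50 per cent bands are scored 4, 3, 2 and 1, mirroring the REF scale. The correlation is the standard Pearson coefficient, computed across the departments in one unit of assessment.

```python
import math


def ref_gpa(pct_4star: float, pct_3star: float, pct_2star: float, pct_1star: float) -> float:
    """REF outputs GPA from the percentage of outputs at each star level."""
    return (4 * pct_4star + 3 * pct_3star + 2 * pct_2star + pct_1star) / 100


def bibliometric_gpa(top5: float, top10: float, top25: float, top50: float) -> float:
    """Hypothetical bibliometric GPA from cumulative shares (0 to 1) of a
    department's articles in the world top 5, 10, 25 and 50 per cent."""
    bands = [top5, top10 - top5, top25 - top10, top50 - top25]
    weights = [4, 3, 2, 1]  # assumption: mirrors the REF 4* to 1* scale
    return sum(w * b for w, b in zip(weights, bands))


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation: 1 is perfect, -1 a perfect inverse relationship."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


print(ref_gpa(30, 45, 20, 5))
print(bibliometric_gpa(0.1, 0.2, 0.5, 0.8))
```

To reproduce a subject-level correlation, one would compute both GPAs for every department in a unit of assessment and pass the two lists to `pearson`.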

The analysts also looked at how REF GPA correlated with the “field-weighted citation impact” of each department’s papers over the assessment period. This widely used metric accounts for differences in citation patterns between papers of different types, subject fields and ages. The findings were similar to those generated from the bibliometrics GPA.
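Field-weighted citation impact is commonly defined per paper as the citations it received divided by the average citations of papers worldwide with the same field, document type and publication year, so a value of 1.0 means world average. The Python sketch below follows that definition and, as an assumption, summarises a department as the mean of its papers’ ratios. The names and the toy data are hypothetical, and Elsevier’s production calculation is not described in the article.

```python
from collections import defaultdict
from statistics import mean

Paper = tuple[str, str, int, int]  # (field, document type, year, citations)


def expected_citations(world: list[Paper]) -> dict[tuple[str, str, int], float]:
    """Average citations for each (field, document type, year) group worldwide."""
    groups: dict[tuple[str, str, int], list[int]] = defaultdict(list)
    for field, doc_type, year, cites in world:
        groups[(field, doc_type, year)].append(cites)
    return {key: mean(counts) for key, counts in groups.items()}


def fwci(papers: list[Paper], expected: dict[tuple[str, str, int], float]) -> float:
    """Mean of each paper's actual / expected citations (assumed aggregation)."""
    ratios = [
        cites / expected[(field, doc_type, year)]
        for field, doc_type, year, cites in papers
        # Skip groups with no expected citations to avoid dividing by zero.
        if expected.get((field, doc_type, year), 0) > 0
    ]
    return mean(ratios)


world = [("physics", "article", 2010, 10), ("physics", "article", 2010, 30),
         ("biology", "review", 2012, 4)]
department = [("physics", "article", 2010, 40), ("biology", "review", 2012, 2)]
print(fwci(department, expected_citations(world)))
```

Here the physics paper is cited at twice the world average for its group and the biology review at half, giving the toy department a score of 1.25.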

In both cases, correlations tend to be higher for the units of assessment (coloured yellow in the graph) whose assessment panels used citation data to inform their judgements – although some subjects using citations, such as physics, have low correlations, while others that did not use citations, such as business and management, have high ones.

Robert Bowman, director of the Centre for Nanostructured Media at Queen’s University Belfast, said that this reassured him that the physics assessment panel “likely read outputs and submissions and weren’t swayed by metrics alone”.

The conclusion of Nick Fowler, managing director of research management at Elsevier, is that metrics could be useful to “inform” REF evaluations in the units of assessment with the highest correlations, where citations “could to some extent predict results”.

But Ralph Kenna, professor of theoretical physics at Coventry University and one of the authors of the h‑index paper, said that this claim was “way too strong” because even overall correlations in excess of 0.7 could still disguise significant outliers, resulting in “misrepresentation of an individual unit’s place in the (already extremely dodgy) rankings and inflation or damage to its reputation”.

He said that in his study, which came up with roughly similar correlations to Elsevier’s, one submission was ranked 27th, compared with its seventh place in the REF. For this reason, Elsevier’s analysis was “another nail in the coffin for the idea of replacing REF by metrics”.
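Kenna’s point, that a high overall correlation can coexist with a large shift in one unit’s position, can be illustrated with invented numbers. In the Python sketch below, 30 hypothetical units are scored identically by the REF and by a metric, except for the unit placed seventh by the REF, which scores poorly on the metric. The data are synthetic and chosen only to illustrate the argument; they are not taken from Kenna’s study or from the REF.

```python
import math


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def ranks(scores: list[float]) -> list[int]:
    """Rank 1 = highest score (assumes no ties)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    result = [0] * len(scores)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


ref_scores = [float(s) for s in range(30, 0, -1)]  # synthetic: unit i has REF rank i + 1
metric_scores = list(ref_scores)
metric_scores[6] = 3.5  # the seventh-placed unit does badly on the metric

r = pearson(ref_scores, metric_scores)
ref_rank = ranks(ref_scores)[6]
metric_rank = ranks(metric_scores)[6]
print(f"r = {r:.2f}; unit ranked {ref_rank} by REF, {metric_rank} by metric")
```

Although the correlation across all 30 units stays above 0.9, the one unit drops from seventh to 27th, which is the kind of misrepresentation Kenna warns about.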

However, Professor Chambers disagreed. For him, the analysis demonstrates that “a simple metric can predict REF outcomes”. But a “smarter and cheaper” REF would need to be based on several metrics to prevent gaming by universities.

“Perhaps the real test of the UK’s research excellence is whether the collective minds of thousands of academics can generate an intelligent automated system of metrics to replace the current failed model,” he said.

POSTSCRIPT:

Article originally published as: Can metrics really replace reviewers in REF? (18 June 2015)
